Problem Overview and Definition

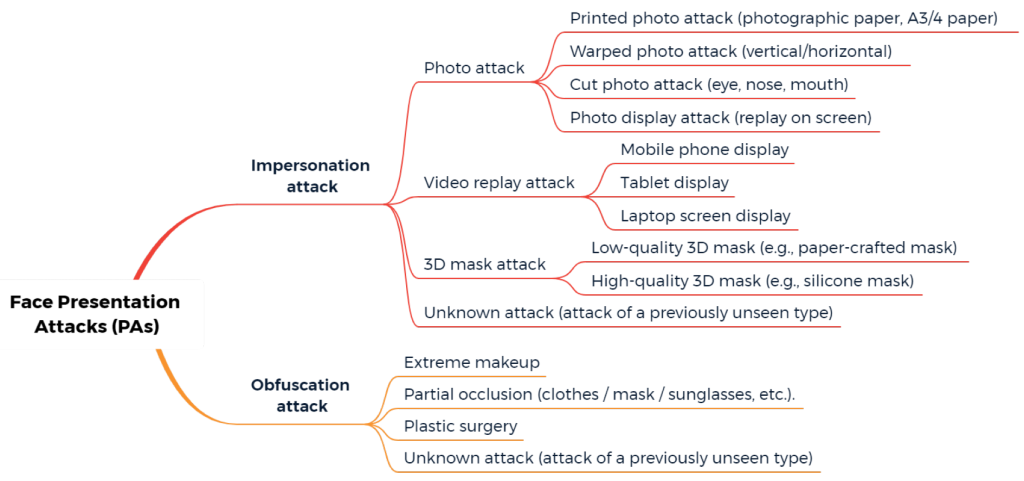

In liveness detection, biometric obfuscation is a malicious technique that allows a culprit to conceal their true identity from a biometric recognition system based on face, voice, or other modalities. For this reason, antispoofing research classifies it as a potential attack tool.

Obfuscation attacks are close to Presentation Attacks (PAs), as they can employ similar tricks and tools: masks, digital alterations, makeup, and so on. However, PAs aim to impersonate a specific target and achieve a false acceptance, whereas obfuscation attacks are carried out to conceal the attacker’s own identity.

In facial antispoofing, obfuscation attacks involve falsifying, disguising, or even removing original biometric traits to bypass an antispoofing system. The attack tools range from simple facial occlusions such as sunglasses to facial plastic surgery. Face morphing can also technically be classified as an obfuscation attack, as it enables a culprit to hide behind someone else’s likeness and be successfully authenticated at an Automatic Border Control (ABC) gate, among other situations.

Obfuscation attacks have been attempted for almost a century, if not longer. In 1933, Theodore Klutas, the head of the College Kidnappers, unsuccessfully tried to file down his fingerprints. Later, the practice was adopted by other Depression-era criminals: John Dillinger, Alvin Karpis, Fred Barker, and others. Recent notable cases include Orland Park’s bank robbery, a series of betting shop robberies in London, the "Immigrant Fingerprints" incident, and more.

Datasets

Two facial datasets have been developed that help counter the obfuscation threat.

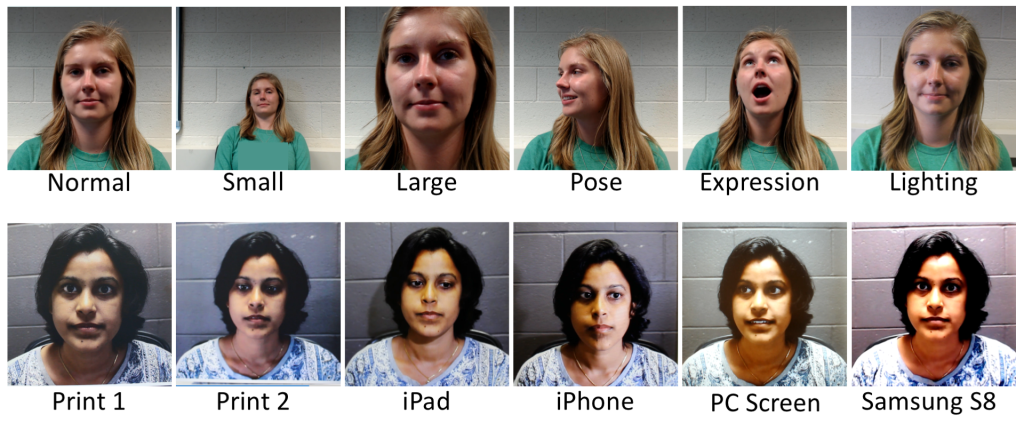

SiW-M

The SiW-M, or Spoof in the Wild database with Multiple Attack Types, is the most extensive dataset focused on obfuscation and impersonation. It contains a rich collection of genuine and spoofed videos featuring 165 volunteers. For each participant, 8 genuine and up to 20 spoof videos were made with proportionate gender and ethnic diversity in mind. The obfuscation part of the dataset focuses on makeup attacks.

HQ-WMCA

The HQ-WMCA, or High-Quality Wide Multi-Channel Attack database, is largely based on the WMCA dataset and was created to further improve its sample quality. It contains 2,904 videos featuring actual attack data with higher resolution and frame rate. Its capture setup includes, among other sensors, shortwave infrared (SWIR) spectrum sensors.

As for other biometric traits, obfuscation is reported to be extensively used in fingerprint attacks. However, there are no known fingerprint datasets that focus solely on this problem.

Types of Obfuscation Attacks

Next, let’s go over some examples of obfuscation attacks and examine how they work.

Extreme Makeup

The impact of makeup on facial recognition has been explored for over a decade. Experts point to Makeup Presentation Attacks, or M-PAs, as a potential threat — with the help of cosmetics, it is possible to change facial shape, conceal unique skin markings, remove wrinkles, and so on.

Makeup is divided into Light and Heavy types. The former is rarely ill-intended, as it serves as a beautification tool and highlights natural complexion, lip color, etc. The latter aims to look unnatural — for example, artistic makeup that drastically changes a person’s appearance.

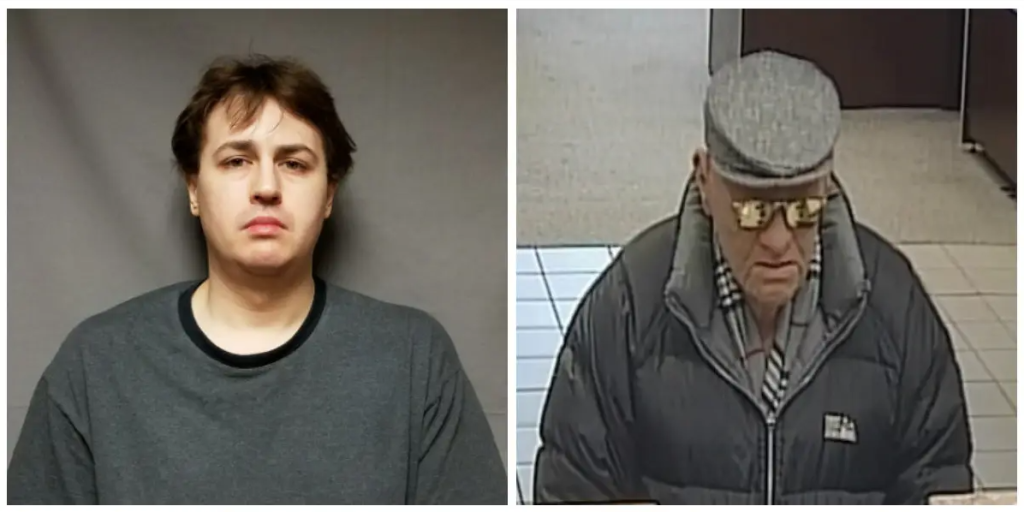

A separate type of makeup can be classified as Camouflaging, with CV Dazzle being the primary example. It was developed specifically to help protesters evade facial recognition. It has reportedly been effective against simpler police algorithms that compare a person’s image to a mugshot database, though more robust anti-spoofing solutions, especially those based on passive liveness detection, can be immune to that tactic.

Partial Occlusion

Partial occlusions, meaning obstructions that hide part of a biometric trait, are a common issue in biometrics. They can disrupt recognition of face, voice, veins, fingerprints, and other traits. The most common facial occluders are masks, glasses, phones, hematomas, drinking bottles, and so on. They can either conceal a person's identity or hinder liveness detection.

Occlusions are divided into Systematic, Temporary, and Synthetic groups. The first implies that an occlusion is either constant or frequently recurring, such as a beard or pigmentation. The second includes pose variations, a person covering their face with a hand, etc. The last type refers to digital hindrances, such as digital stickers or augmented reality (AR) filters. Illumination levels are also considered a special type of occlusion.

Plastic Surgery

Facial surgical procedures have been used for malicious purposes since the 1930s: a report titled Plastic Surgeon and Crime was issued in 1935. Plastic surgery is divided into two types:

- Local surgery. Mostly a benign procedure that aims to remove unaesthetic blemishes — birthmarks, wrinkles, or scars.

- Global surgery. This type is used when a patient’s appearance needs to be reconstructed, usually after extreme damage to the facial structure. However, the same procedure can be used to completely change a subject’s look.

Developing a surgery-detecting solution is problematic because training material is difficult to collect: before-and-after footage of patients is often protected by medical confidentiality.

Experiments

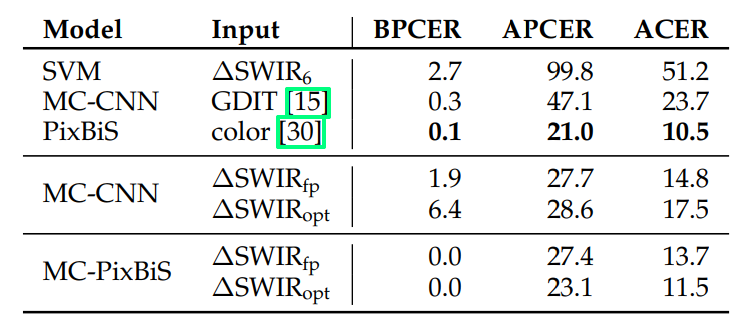

Obfuscation attacks are harder to detect than regular PAs because they are subtler. While impersonation PAs tend to change the entire face of a culprit, obfuscation mostly alters a specific facial region. In the reported experiments, Pixel-Wise Binary Supervision (PixBiS) performed best, as it analyzes color information and can detect occlusions such as glasses, wigs, and makeup. Its enhanced version, MC-PixBiS + ∆SWIR_opt, could also detect tattoos.
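The idea behind pixel-wise binary supervision can be sketched as a loss function: the network predicts a small map of per-region liveness scores plus one overall score, and both are compared with the same genuine/attack label. The snippet below is only an illustration of that idea, not the published implementation. The 14×14 map size, the 0.5 weighting, and the function names are assumptions made for this example.

```python
import numpy as np

def bce(pred, target, eps=1e-7):
    """Mean binary cross-entropy between predicted probabilities and 0/1 targets."""
    pred = np.clip(pred, eps, 1 - eps)
    return float(np.mean(-(target * np.log(pred) + (1 - target) * np.log(1 - pred))))

def pixbis_style_loss(pixel_map, binary_score, is_bonafide, weight=0.5):
    """Combine per-pixel and whole-image supervision (weight is an assumed value)."""
    label = 1.0 if is_bonafide else 0.0
    # Every cell of the map is supervised with the same label as the whole image.
    target_map = np.full_like(pixel_map, label)
    pixel_loss = bce(pixel_map, target_map)
    binary_loss = bce(np.array([binary_score]), np.array([label]))
    return weight * pixel_loss + (1 - weight) * binary_loss

# Hypothetical network outputs for a genuine (bona fide) face.
confident_map = np.full((14, 14), 0.9)
uncertain_map = np.full((14, 14), 0.5)
loss_good = pixbis_style_loss(confident_map, 0.9, is_bonafide=True)
loss_bad = pixbis_style_loss(uncertain_map, 0.5, is_bonafide=True)
```

Because each map cell is scored separately, a localized alteration such as glasses or makeup can raise the loss in just the affected region, which is one plausible reason this kind of supervision suits obfuscation attacks.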

Evasion and Obfuscation in Speaker Recognition

Automatic Speaker Verification (ASV) is susceptible to obfuscation attacks as well. Two typical groups of methods are prosodic and synthetic manipulations, which include pitch alteration, usage of falsetto, bite blocking, voice muffling, simulated speech impediments, voice conversion, white noise, and so on. The problem is still poorly explored, and some ASV systems show an Equal Error Rate (EER) of 20% to 48%.
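For readers unfamiliar with the metric, the EER is the error rate at the decision threshold where the false acceptance rate and the false rejection rate are equal. A minimal sketch of how it can be estimated from verification scores is shown below. The scores are made up for illustration, and the function name is hypothetical.

```python
def equal_error_rate(genuine_scores, impostor_scores):
    """Estimate (eer, threshold) for scores where higher means 'same speaker'."""
    thresholds = sorted(set(genuine_scores) | set(impostor_scores))
    best = None
    for t in thresholds:
        # False rejection: genuine trials scored below the threshold.
        frr = sum(s < t for s in genuine_scores) / len(genuine_scores)
        # False acceptance: impostor trials scored at or above the threshold.
        far = sum(s >= t for s in impostor_scores) / len(impostor_scores)
        gap = abs(far - frr)
        if best is None or gap < best[0]:
            # Keep the threshold where the two error rates are closest.
            best = (gap, (far + frr) / 2, t)
    return best[1], best[2]

# Hypothetical similarity scores, not taken from any real system.
genuine = [0.9, 0.8, 0.7, 0.4]
impostor = [0.6, 0.3, 0.2, 0.1]
eer, threshold = equal_error_rate(genuine, impostor)
```

With these toy scores, one of four genuine trials is rejected and one of four impostor trials is accepted at the chosen threshold, so the EER is 25%.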

Face Morphing and Gender Obfuscation

Face morphing can be used for gender obfuscation, as the study Gender Obfuscation through Face Morphing shows. In this case, a male or female face serves as an obfuscator that supplies the target face with the required gender information:

- Male + Female obfuscator = Average female face.

- Female + Male obfuscator = Average male face.

To achieve better results, the images should be neutral and taken against a homogeneous background. Delaunay triangulation is applied to divide the faces being morphed into a mesh of triangles, which yields higher-quality results.
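The first two steps of a typical morphing pipeline, averaging the landmark positions of the two faces and triangulating the result, can be sketched as follows. This is only an illustration under simplifying assumptions: the five landmark coordinates are invented, `average_shape` is a hypothetical helper, and the later steps (warping each triangle and blending pixel values) are omitted. The triangulation uses `scipy.spatial.Delaunay`.

```python
import numpy as np
from scipy.spatial import Delaunay

# Hypothetical (x, y) landmarks: four image corners plus one facial point.
target_points = np.array(
    [[0, 0], [100, 0], [100, 100], [0, 100], [50, 40]], dtype=float
)
obfuscator_points = np.array(
    [[0, 0], [100, 0], [100, 100], [0, 100], [50, 60]], dtype=float
)

def average_shape(points_a, points_b, alpha=0.5):
    """Blend two landmark sets; alpha=0.5 gives the average face shape."""
    return (1 - alpha) * points_a + alpha * points_b

# Landmarks of the morphed (average) face.
morphed_points = average_shape(target_points, obfuscator_points)

# Split the morphed shape into triangles; each row holds three landmark indices.
triangulation = Delaunay(morphed_points)
triangles = triangulation.simplices
```

In a full pipeline, the same triangle indices would be applied to both source faces so that each triangle can be warped onto the averaged shape before the pixel values are blended.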

Obfuscation Attacks in Authorship Recognition

Authorship is defined by an author’s unique style: word choice, chord progressions, length of sentences, palette of colors, favored plots, and so on. A few methods exist to counter this obfuscation type, so far focusing on linguistics only. Among them are stylometric analysis, synonym-based classifiers, and Artificial Neural Network (ANN) approaches focusing on lexical properties, alternative readability measures, character count, and other features.
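To give a sense of what such features look like, here is a minimal sketch that extracts a few simple stylometric measurements from a text. The feature set and the function name are chosen for illustration only. Real authorship systems use far richer features and a trained classifier on top.

```python
import re

def stylometric_features(text):
    """Return a few simple style features for a piece of English text."""
    # Split on sentence-ending punctuation and drop empty fragments.
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text.lower())
    if not words or not sentences:
        raise ValueError("text must contain at least one word")
    return {
        "char_count": len(text),
        "avg_sentence_length": len(words) / len(sentences),  # words per sentence
        "avg_word_length": sum(len(w) for w in words) / len(words),
        "type_token_ratio": len(set(words)) / len(words),  # vocabulary richness
    }

sample = "The cat sat. The cat ran away!"
features = stylometric_features(sample)
```

An obfuscator who deliberately changes sentence length or vocabulary shifts these values, which is why detection methods tend to combine many such features rather than rely on one.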

References

- J. Dillinger is reported to be the first culprit who successfully used surgical obfuscation to conceal his fingerprints and face

- A Survey on Anti-Spoofing Methods for Facial Recognition with RGB Cameras of Generic Consumer Devices

- John Dillinger - Fingerprint Obliteration

- Bandit Who Wore Old Man Mask Charged in Orland Bank Heist: FBI

- The man in the latex mask: BLACK serial armed robber disguised himself as a WHITE man to rob betting shops

- Doctor convicted of surgery to alter immigrant fingerprints

- Alvin Karpis demonstrating his fingers with surgically removed fingerprints

- SiW: Spoofing in the Wild Database

- High-Quality Wide Multi-Channel Attack (HQ-WMCA)

- Wide Multi Channel Presentation Attack (WMCA)

- Examples of fingerprint alteration

- Examples of web-collected images before and after the use of makeup to change facial shape

- Example of impersonation through extreme artistic-level makeup

- 'Dazzle' makeup won't trick facial recognition. Here’s what experts say will

- Can this makeup fool facial recognition?

- Overly bright photo flash is considered a facial occlusion

- Plastic Surgeon and Crime

- Drug cartel boss used facial plastic surgery to avoid police for 30 years before being arrested in Brazil

- Deep Models and Shortwave Infrared Information to Detect Face Presentation Attacks

- Deep Pixel-wise Binary Supervision for Face Presentation Attack Detection

- Evasion And Obfuscation In Speaker Recognition Surveillance And Forensics

- Delaunay Triangulation

- Gender Obfuscation through Face Morphing

- The Federalist Papers by Wikipedia

Antispoofing
