Problem Overview

Facial recognition is one of the earliest techniques used to identify a person using Artificial Intelligence (AI). The first instance can be dated back to 1964 when Woodrow Bledsoe conducted a series of experiments using a primitive scanner to capture a human face and a computer to identify it.

The first attempt to put facial recognition to practical use was made in the early 2000s in testing carried out by the National Institute of Standards and Technology (NIST), a U.S. government organization. The series of tests, called the Face Recognition Vendor Tests (FRVT), built on the earlier Face Recognition Technology (FERET) program. The goal was to eventually help the government employ this technology for practical applications.

Since then, facial recognition has become commonplace in many applications, often in the name of security. Many modern banking apps have employed the technology as a login feature, and many devices such as smartphones and laptops allow face recognition unlocking. It’s also used in border checks, social media, video games, controlled gambling, education, sporting events, and more.

However, as facial recognition technologies have advanced over the years, so have the tactics and methods impostors use to defeat them. These methods range from rudimentary to complex: from showing a simple 2D photo to constructing an elaborate 3D silicone mask, which can sometimes even "emulate" the warmth produced by a live human face.

Terminology

Let’s define some key terms that you will need to know as you read on about these types of attacks. Antispoofing terminology is chiefly defined by the International Organization for Standardization (ISO), notably in the ISO/IEC 30107 series on presentation attack detection.

Commonly used terms include:

- Spoofing attack: An attempt by an impostor to be successfully identified by a recognition system as someone else. The attack is carried out for various purposes, most often illegal ones.

- Antispoofing: A set of countermeasures designed to mitigate or prevent spoofing attacks.

- Presentation Attack (PA): An attack during which a biometric presentation — a target's photo, deepfake video, mask, etc. — is presented to the recognition system, while often emulating face liveness.

- Indirect attack: A method which exploits a technical weakness of the recognition system, such as flawed coding, in order to hack it.

- Presentation Attack Detection (PAD): A technology which scans biometric signals of a presented object to detect whether it is a legitimate person or their imitation.

- Presentation attack instrument: An item used for presenting an attack, such as a mask, target's picture, pre-recorded video or fake fingerprints, among other things.

- Half-Total Error Rate (HTER): A parameter used to assess the performance quality of a recognition system. HTER is calculated with the help of False Rejection Rate and False Acceptance Rate values, using the formula: HTER = (FAR+FRR) / 2.
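The HTER formula above can be illustrated with a short sketch. The score lists, the threshold, and the function names below are hypothetical and chosen only for illustration. The sketch assumes that a higher score means "more likely live" and that a sample is accepted when its score reaches the threshold.

```python
def error_rates(genuine_scores, attack_scores, threshold):
    """Return (FAR, FRR) for a given decision threshold.

    A sample is accepted when its score is >= threshold.
    """
    if not genuine_scores or not attack_scores:
        raise ValueError("both score lists must be non-empty")
    # FAR: share of attack samples that were wrongly accepted.
    false_accepts = sum(1 for s in attack_scores if s >= threshold)
    # FRR: share of genuine samples that were wrongly rejected.
    false_rejects = sum(1 for s in genuine_scores if s < threshold)
    return false_accepts / len(attack_scores), false_rejects / len(genuine_scores)


def hter(far, frr):
    """Half-Total Error Rate: the mean of FAR and FRR."""
    return (far + frr) / 2


# Hypothetical liveness scores in [0, 1]; not taken from any real system.
genuine = [0.91, 0.85, 0.40, 0.77]
attacks = [0.10, 0.62, 0.30, 0.05]

far, frr = error_rates(genuine, attacks, threshold=0.5)
print(far, frr, hter(far, frr))  # FAR = 0.25, FRR = 0.25, HTER = 0.25
```

Because FAR and FRR both depend on the threshold, HTER is usually reported for a threshold fixed in advance, for example one tuned on a separate development set.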

Liveness detection and antispoofing are relatively new technologies; therefore, their terminology and standards are still in development.

Types of Attacks

There are two types of facial recognition attacks: Presentation Attacks and Injection Attacks.

Presentation attacks

Presentation Attacks (PAs) involve manipulating or falsifying existing biometric features and then presenting them physically to a biometric system's sensor, in this case a camera. However, not every PA is adversarial in nature. For example, a face recognition system can falsely reject a legitimate subject who has undergone plastic surgery, applied cosmetics, and so on.

Malicious presentation attacks aim to fool the facial recognition technology for nefarious purposes. They can be separated into:

- Impostor Attacks, which focus on impersonating the target's facial parameters or completely synthesizing them from scratch.

- Concealment Attacks, which are utilized to camouflage the attacker's true identity to avoid identification.

Facial PAs encompass a range of techniques that vary in quality and creativity. Impostors are now able to use advanced technologies to bypass facial recognition. We have compiled them in this table, ranging from crude to complex:

| Attack type | Description |
| --- | --- |
| Printed photo | The simplest type of facial spoofing attack involves presenting a printed photo of a targeted person to a facial recognition system. Because the method is so primitive, it poses a low threat level. |
| Display attack | In a display attack, the screen of a mobile device is used to reproduce an image or video of the target and present it to the system's camera. |
| 2D mask | Fraudsters also use printed 2D masks, at times with holes in them, which imitate a human face. In this attack, the facial proportions and the size of the image are meticulously reproduced to create a realistic facial image. |
| 3D mask | A more sophisticated method involves sculpting a realistic 3D mask which mimics the face of the target. In 2017, the Vietnamese security company Bkav demonstrated the successful unlocking of an iPhone X using a 3D-printed mask. |
| Silicone mask | Another form of attack uses masks made of silicone. Thanks to their elasticity, such masks can achieve a highly believable likeness of a target. |
| Deepfake | Deepfakes are artfully doctored videos generated with the help of AI. They can "attach" a target's face to another body, generate a video clip from a static image, or even mimic a voice. |
| Pose/expression variation | To further conceal their identity and evade detection, an attacker can depart from a neutral facial expression or change their head pose. |

PAs belong to the analog domain, meaning they happen outside the solution's operating system and memory, even though they target the system's algorithms.

Injection attacks

As opposed to PAs, injection attacks happen in the digital domain: they intercept bona fide biometric data inside the system and replace it with a forgery. They come in software-based and hardware-based types.

In the software type, a target's device is infected with a malicious application that grants access to its memory, internal communication channels, biometric templates, and so on. The attacker can then modify the genuine data, for example by manipulating the feature extraction module.

The hardware type requires a module that converts an HDMI stream to MIPI CSI, along with an LCD controller board. This setup can replace the genuine video stream with a fake one coming from another device. The hardware-based approach is more dangerous, as it offers lower latency and removes other cues that could expose the interference.

Facial Spoofing Prevention

Modern biometric-based security systems offer a range of solutions to tackle facial spoofing.

Hardware-based solutions

Active flash is one of the most effective countermeasures against facial spoofing. The technique analyzes how light reflects from the presented object, which lets the system determine whether the object lacks the shape, depth, and detail of a real human face. A real face and a mask reflect light differently, and the discrepancy shows up at higher frequencies that the system can detect.

Infrared sensors fitted to a camera are another potential antispoofing measure. They gather thermal data from the target and analyze how heat is emitted and distributed across it, patterns that are incredibly hard to falsify.

Pupil dilation can be used as another measure to detect PAs. Pupils constrict or dilate in reaction to changes in lighting. An intentional but safe (nontraumatic) increase or decrease of light by the detection system will make the pupils react, which a 2D camera can detect together with blinking.

3D cameras are also a promising solution; they are able to capture a stereo image due to their "binocular vision", and can also assess if the object presented has enough depth for a real human face and whether its size is adequate for the target.
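The depth check described above can be sketched as follows. This is a minimal illustration, not a production algorithm: it assumes an idealized pinhole stereo pair, and the focal length, baseline, disparity values, and the 2 cm relief threshold are all hypothetical.

```python
def disparity_to_depth(disparity_px, focal_px, baseline_m):
    """Pinhole stereo relation: depth = focal length * baseline / disparity."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def has_face_like_relief(depths_m, min_relief_m=0.02):
    """True when the depth spread across the face region reaches a threshold.

    A flat photo or screen held parallel to the camera yields nearly constant
    depth, while on a real face the nose tip is noticeably closer to the
    camera than the cheeks or ears. The threshold is an assumed value.
    """
    return max(depths_m) - min(depths_m) >= min_relief_m


# Hypothetical disparities (pixels) measured at the nose tip, a cheek, and an ear.
FOCAL_PX, BASELINE_M = 800, 0.06
face_depths = [disparity_to_depth(d, FOCAL_PX, BASELINE_M) for d in (100, 96, 90)]
photo_depths = [disparity_to_depth(d, FOCAL_PX, BASELINE_M) for d in (96, 96, 96.2)]

print(has_face_like_relief(face_depths))   # True: about 5 cm of relief
print(has_face_like_relief(photo_depths))  # False: about 1 mm of relief
```

A tilted photo would also show a depth spread, so a practical system would more likely fit a plane to the depth map and examine the residuals rather than rely on a simple range check.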

LiDAR (light detection and ranging) can also be used to measure distance and depth to assess whether a presented target is fake. Apple added a rear-facing LiDAR scanner to the iPhone 12 Pro models for depth sensing; the facial unlocking feature, Face ID, relies on the separate front-facing TrueDepth camera.

Software-based solutions

Software-based (SW) solutions utilize existing camera systems to detect liveness. Regular cameras provide only RGB texture information and do not support infrared, depth map, or other 3D features. Therefore, software-based solutions involve complicated post-processing techniques to provide an accurate liveness decision.

There are two widely used SW-based methods: active liveness and passive liveness.

Active liveness methods involve challenge-and-response techniques where a person is asked to perform a task, such as smiling, blinking repeatedly, turning their head, or closing their eyes. By analyzing the movement, the system can identify whether it is a real person or not. Some active liveness methods reconstruct a 3D map using footage of a target recorded from different angles and distances.
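The challenge-and-response flow can be sketched in a few lines. The challenge names, the time limit, and the shape of the `observed` data are assumptions made for illustration. The part that actually recognizes a smile or a blink in video is a separate, hypothetical detector that is not shown here.

```python
import random

# Hypothetical set of prompts the system may show to the user.
CHALLENGES = ("smile", "blink", "turn_head_left", "turn_head_right", "close_eyes")


def issue_challenges(count=3, rng=None):
    """Pick a random sequence of distinct prompts.

    Randomness matters: a pre-recorded video cannot anticipate which
    actions will be requested, or in what order.
    """
    if rng is None:
        rng = random.SystemRandom()
    return rng.sample(CHALLENGES, count)


def verify_responses(challenges, observed, max_seconds=5.0):
    """Check that every prompt was answered correctly, in order, and in time.

    observed: list of (action, seconds_taken) pairs reported by a
    hypothetical video-based action detector.
    """
    if len(observed) != len(challenges):
        return False
    for expected, (action, seconds) in zip(challenges, observed):
        if action != expected or seconds > max_seconds:
            return False
    return True


prompts = issue_challenges(3)
# Simulated detector output for a cooperative live user.
print(verify_responses(prompts, [(p, 1.2) for p in prompts]))  # True
```

The time limit is a design choice: a short window makes it harder for an attacker to synthesize a matching response on the fly, at the cost of rejecting slower genuine users.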

Passive liveness includes mostly software-based techniques that function in the background and remain unintrusive. They are usually divided into two groups:

- Passive techniques detect liveness using data from the device sensors. In this case, the detection software requires access to the device sensors to control parameters such as the color or brightness of the display, so that it can analyze changes in the color spectrum of the face. Another example is controlling camera focus to reconstruct a 3D face map.

- Fully passive techniques are those in which both the user experience and software integration are passive. These methods are based on texture analysis or optical flow analysis of a single RGB image, set of images or a recorded video.

Texture-based analysis is an effective and widely used method of passive detection. This method examines the skin texture in the target image or video by analyzing how the surface reflects light. Since human skin and its fake analogues, especially printed photos, have different surface properties and texture patterns, differentiating a false image from an actual face with texture-based analysis is relatively easy.
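As an illustration of what a texture descriptor looks like, here is a minimal sketch of local binary patterns (LBP). The text above does not name a specific descriptor, so the choice of LBP is an assumption. Each pixel is compared with its eight neighbours, the comparison results form an 8-bit code, and the histogram of codes summarizes the micro-texture of the region. In a real pipeline such histograms would be fed to a trained classifier, which is not shown.

```python
def lbp_histogram(gray):
    """Normalized 256-bin histogram of 8-neighbour local binary patterns.

    gray: 2D list of grayscale intensities (equal-length rows, at least 3x3).
    Border pixels are skipped because they lack a full neighbourhood.
    """
    height, width = len(gray), len(gray[0])
    if height < 3 or width < 3:
        raise ValueError("image must be at least 3x3")
    # Neighbour offsets (dy, dx), clockwise starting from the top-left pixel.
    offsets = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]
    hist = [0] * 256
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            center = gray[y][x]
            code = 0
            for bit, (dy, dx) in enumerate(offsets):
                # Set the bit when the neighbour is at least as bright as the center.
                if gray[y + dy][x + dx] >= center:
                    code |= 1 << bit
            hist[code] += 1
    total = (height - 2) * (width - 2)
    return [count / total for count in hist]


# Toy inputs: a perfectly flat patch and a checkerboard patch.
flat = [[128] * 4 for _ in range(4)]
checker = [[255 if (x + y) % 2 == 0 else 0 for x in range(4)] for y in range(4)]

print(lbp_histogram(flat)[255])     # 1.0: every interior pixel yields code 255
print(lbp_histogram(checker)[85])   # 0.5: bright pixels see only diagonal matches
```

The two toy patches produce clearly different histograms, which is the property a classifier exploits when separating skin texture from paper or screen texture.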

Deep convolutional neural networks (CNNs) are also effective at passive antispoofing. CNNs are designed specifically to analyze visual data. They learn known attack patterns from training examples, in particular spatial and temporal features, from which aligned feature maps are built. Most CNN-based approaches rely on these principles.

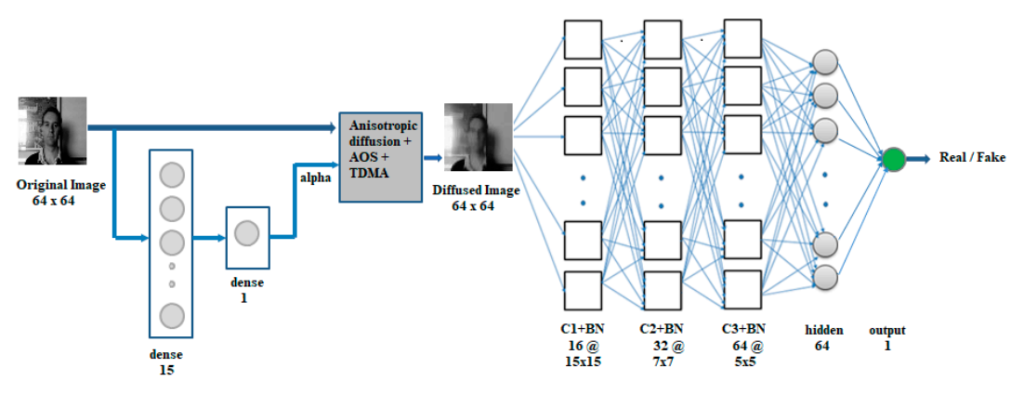

Another proposed passive technique relies on anisotropic diffusion. According to this principle, light diffuses more slowly when reflected from a 2D surface than from a 3D surface. A 3D object, such as a human face, allows faster anisotropic diffusion due to its nonuniform surface, and this difference is detected by a Specialized Convolutional Neural Network (SCNN). The architecture can also be applied to video sequences to prevent replay attacks.
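To give a feel for what anisotropic diffusion does to an image, the sketch below implements one step of the classic Perona-Malik scheme. This is an assumption: the text above does not say which diffusion scheme the proposed method uses, and it may well differ. The parameters `kappa` and `step` are illustrative values. The diffused image would then serve as input to a classifier, which is not shown.

```python
import math


def perona_malik_step(img, kappa=20.0, step=0.2):
    """One explicit Perona-Malik diffusion step on a 2D list of floats.

    Smoothing is strong in flat regions and weak across edges, because the
    conduction coefficient exp(-(d / kappa) ** 2) shrinks as the intensity
    difference d to a neighbour grows. Keep step <= 0.25 for stability.
    """
    height, width = len(img), len(img[0])
    out = [row[:] for row in img]
    for y in range(height):
        for x in range(width):
            update = 0.0
            for dy, dx in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                ny, nx = y + dy, x + dx
                # Neighbours outside the image are skipped (no flux across the border).
                if 0 <= ny < height and 0 <= nx < width:
                    diff = img[ny][nx] - img[y][x]
                    update += math.exp(-((diff / kappa) ** 2)) * diff
            out[y][x] = img[y][x] + step * update
    return out


# Small bump of noise: it is smoothed toward its surroundings.
noisy = [[10.0, 10.0, 10.0], [10.0, 14.0, 10.0], [10.0, 10.0, 10.0]]
print(perona_malik_step(noisy)[1][1])  # between 10 and 14

# Sharp edge (0 next to 255): it is left almost untouched.
edge = [[0.0, 0.0, 255.0, 255.0] for _ in range(3)]
print(perona_malik_step(edge)[1][1])   # stays very close to 0
```

The edge-preserving behaviour is the point of the technique: fine surface detail is smoothed away while depth-related structure such as facial contours survives.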

FAQ

What is image spoofing?

Image spoofing is a presentation attack type which uses a 2D image of a targeted person.

Image spoofing is the most commonly used type of spoofing attack. In this attack, malicious actors print a 2D photo of the target and then present it to the sensors of a recognition system (hence the name “presentation attack”). This attack is popular because it is cheap, quick, and easy to execute.

Because image spoofing is so simple, it is also easy to detect, and it rarely succeeds against advanced recognition systems. However, it can still succeed against relatively inexpensive smartphones, which may not be equipped with the latest security tools. Cheaper or older phones often lack the equipment needed to differentiate a 2D object (a presented image) from a 3D one (a live face).

What is CNN (Convolutional Neural Network)?

A convolutional neural network is a type of artificial neural network (ANN) extensively used in biometric security.

Convolutional Neural Networks (CNNs) are a subtype of Artificial Neural Networks (ANNs). Their name comes from the specific architecture, which involves three elements:

- Convolutional layer: where the main computation occurs. A filter (kernel) slides over the input data and produces a feature map.

- Pooling layer: reduces dimensionality by applying an aggregation function to the values in each receptive field. There are two common types of pooling: max and average.

- Fully connected layer: maps the extracted features to output classes, typically using a softmax activation function to produce class probabilities.
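The three elements listed above can be traced in a tiny forward pass written in plain Python. This is a toy sketch under stated assumptions: the kernel and the fully connected weights are made up rather than trained, the two output classes ("live" and "spoof") are hypothetical, and real systems use a deep learning framework with many stacked layers.

```python
import math


def conv2d(image, kernel):
    """Valid 2D convolution (cross-correlation, as in most CNN libraries)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [
        [
            sum(image[y + i][x + j] * kernel[i][j] for i in range(kh) for j in range(kw))
            for x in range(out_w)
        ]
        for y in range(out_h)
    ]


def max_pool(feature_map, size=2):
    """Non-overlapping max pooling; leftover rows and columns are dropped."""
    out_h, out_w = len(feature_map) // size, len(feature_map[0]) // size
    return [
        [
            max(feature_map[y * size + i][x * size + j] for i in range(size) for j in range(size))
            for x in range(out_w)
        ]
        for y in range(out_h)
    ]


def fully_connected(features, weights, biases):
    """weights: one row of len(features) weights per output class."""
    return [sum(w * f for w, f in zip(row, features)) + b for row, b in zip(weights, biases)]


def softmax(logits):
    peak = max(logits)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


# 5x5 toy image with a vertical edge, and a made-up edge-detecting kernel.
image = [[0, 0, 1, 1, 1] for _ in range(5)]
kernel = [[-1, 1], [-1, 1]]

feature_map = conv2d(image, kernel)                  # 4x4 feature map
pooled = max_pool(feature_map)                       # 2x2 after pooling
flat = [v for row in pooled for v in row]            # flatten to 4 features
weights = [[0.5, 0, 0.5, 0], [0, 0.5, 0, 0.5]]       # untrained, illustrative
probs = softmax(fully_connected(flat, weights, [0, 0]))
print(pooled, probs)  # probs[0] is "live", probs[1] is "spoof" (hypothetical labels)
```

Pooling halves each spatial dimension here, which is the dimensionality reduction mentioned in the list. The softmax output always sums to 1, so it can be read as class probabilities.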

CNNs show highly accurate results in image and video recognition, as well as in voice and facial liveness detection. They are therefore widely employed in antispoofing.

What is 3D face recognition?

3D face recognition is considered the most accurate method in facial recognition technology.

3D recognition is a technique employed as part of facial antispoofing measures. It involves a stereoscopic 3D camera that is capable of range imaging. This feature is critical in analyzing the reflection of light from a presented face.

As a result, the system can detect the geometry of rigid facial features; if an object presented as a human face lacks the depth, shape, and anatomical detail of a real face, the system will reject it as a fake. An alternative 3D face recognition technique works by using non-specialized cameras. This approach requires a user to perform head movements, so that their image can be captured from different angles.

What is 2D face recognition?

2D face recognition is a relatively simple method of identifying a human face.

2D face recognition is less complicated compared to 3D recognition. It does not involve sophisticated equipment and techniques such as 3D stereoscopic and infrared cameras, flood illuminators, sensors, or in-depth facial maps.

2D facial recognition simply compares a stored image of a user with the face presented to its cameras. This technique is especially popular as part of the “Phone Unlock” feature in less expensive phone models. Although it is simpler, cheaper, and more accessible, 2D face recognition is also more vulnerable to threats. It can fail to detect a presentation attack in the form of a printed photo. Therefore, liveness detection modules are commonly used in conjunction with 2D face recognition for more accurate results.

Is 3D face recognition better than 2D?

3D face recognition is widely considered to be superior to 2D in terms of accuracy. 2D face recognition is better from the hardware requirements point of view.

Face recognition systems that use tools designed for identifying 3D objects show higher accuracy. A 3D camera is a popular choice of equipment for 3D face recognition. Using stereoscopic vision, a 3D camera can tell if a presented face lacks the depth and volume intrinsic to a live human face or head. Infrared sensors are another vital component used in 3D face recognition. They detect the warmth emitted by the target’s face and can therefore differentiate between a lifeless photo or mask and a real face.

A 3D recognition system also analyzes the light distribution on the facial surface as well as the frequency spectrum. Because it usually combines face recognition with 3D liveness detection, a 3D facial recognition system is extremely hard to trick.

That being said, 2D face recognition doesn't require any specific hardware, and in conjunction with 2D liveness detection can be used on a broader range of different devices.

How does liveness work with face biometrics?

Liveness detection uses data provided by facial biometrics to prevent presentation attacks.

Biometric recognition systems use liveness detection techniques to identify whether a presented person is real. Based on this, a security system can accept or reject authorization.

Liveness detection relies on multiple features and behaviors of the human face, including pupil dilation, skin texture, temperature, face coloring, lip movement, breathing patterns, blinking, and more. To analyze this data, it employs techniques such as optical flow analysis, residual neural networks, Fourier spectral analysis, and stereoscopic imaging. Based on the results, the system determines whether the face is real.

