Problem Overview

Occlusion of an anatomical trait is a recurring problem in biometrics. In 2020, when mask-wearing became widespread, facial recognition faced a crisis: phone unlock features failed to recognize their owners’ faces, and authorities had more trouble identifying suspects in CCTV footage.

Facial occlusion’s effect on facial recognition was in the news even before COVID. The year prior, during the 2019 Hong Kong demonstrations, citizens realized they could thwart the facial recognition technologies used to identify and arrest protesters by obscuring their features with masks and face paint. A somewhat similar tactic, a facial makeup style called “CV Dazzle”, was employed a year later by American social activists. According to its author, it is based on the idea of reverse engineering facial recognition systems. However, experts dispute its efficacy against today’s solutions.

Medical and other masks were widely used during the Hong Kong protests to evade facial recognition

The issue itself has been studied since at least 2009, when Hazım Kemal Ekenel and Rainer Stiefelhagen explored the reduced performance of facial recognition (FR) on occluded face images.

Occlusions are observed in practically every biometric domain. For example, a high cholesterol level can disrupt vein recognition, loud noise can interfere with voice recognition, earphones or headphones can affect ear shape recognition, and a bandage can affect fingerprint readings.

However, the area of biometrics most sensitive to disruption remains facial recognition. Everything from eyeglasses to scarves to makeup can sabotage a facial recognition system, whether accidentally or maliciously.

To tackle potentially criminal behavior and to reduce the customer friction that facial occlusion can cause, a number of solutions and techniques under the moniker OFR (Occluded Facial Recognition) are being tested. Many of these solutions are based on passive liveness detection.

Influence of Occlusion on Face Recognition

The Ekenel-Stiefelhagen 2009 research began with a number of experiments. They demonstrated that FR accuracy varies with occluded facial images depending on:

- FR model in use (including whether it's passive or active liveness detection).

- Location of the occluded facial area.

- Facial feature misalignment.

- Texture of an occlusion.

The authors conducted an experiment with the AR database, in which subjects wear sunglasses and scarves. The results showed that upper face occlusions, especially in the eye region, cause a significant drop in recognition accuracy, from 92.7% to 37.3%. Meanwhile, lower face occlusions (mouth and chin) show a less dramatic decline: from 91.8% to 83.6%.

The authors suggest that this phenomenon can be explained by the misalignment sensitivity of common FR approaches, such as elastic bunch graph matching or Fisherfaces. A face alignment algorithm could be a possible remedy: it minimizes the closest distance at the classification step, while also providing a rough estimate of facial feature positions. Interestingly, the authors also pointed out that an occlusion’s surface texture may affect recognition accuracy.
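To put the two declines on a common footing, the quoted figures can be converted into absolute and relative drops. The sketch below uses only the four percentages cited above; the function name `accuracy_drop` is illustrative and not taken from the original study.

```python
# Accuracy figures (in percent) as quoted in the text above.
results = {
    "upper face (eye region)": (92.7, 37.3),
    "lower face (mouth and chin)": (91.8, 83.6),
}


def accuracy_drop(before, after):
    """Return (absolute drop in percentage points, relative drop in percent)."""
    absolute = before - after
    relative = 100.0 * absolute / before
    return absolute, relative


for region, (before, after) in results.items():
    absolute, relative = accuracy_drop(before, after)
    print(f"{region}: -{absolute:.1f} points ({relative:.1f}% relative)")
```

Under this reading, the upper-face occlusion removes well over half of the original accuracy, while the lower-face occlusion removes less than a tenth of it.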

Types of Face Occlusion

As observed by Antispoofing.org, there are two general types of occlusion: Real and Synthetic. Natural occlusions, such as eyelashes, facial hair, or skin conditions, are sometimes mentioned as an independent type as well.

A more detailed classification includes:

Real type

- Systematic. Facial hair, pigmentation, makeup, and others.

- Temporary. Pose variations, hand covering, telephones, blood or bruises, cups, cosmetic masks.

- Special case. Blurred facial image, low resolution, overly bright/extremely low illumination.

- Mixed. Involves two or more types of real occlusion.

Synthetic type

This category contains synthetic, or digitally produced, occlusions. These can be used to simulate real occlusions. Various filters used on social media, such as mustache filters or headwear filters, can also be counted among synthetic occlusions.

Approaches to Recognizing Occluded Faces

Currently, there are three primary categories of methods for recognizing faces when occlusions are present.

Occlusion Robust Feature Extraction (ORFE)

This group is based on the concept of extracting facial features that are less affected by occlusions while preserving their discriminative capability. These methods are separated into two groups:

- Engineered features. They allow for extracting features from the precisely outlined facial regions without a learning stage.

- Learning-based features. Here, feature extraction is performed with learning-based methods: sparse representation, nonlinear deep learning techniques, etc.

ORFE comprises such techniques as patch-based engineered features, Local Binary Patterns (LBP), Histogram of Oriented Gradients (HOG) descriptors, and so on.
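As an illustration of a patch-based engineered feature, here is a minimal sketch of the basic 3x3 Local Binary Pattern computed with NumPy, followed by per-patch histograms. The function names, the neighbour ordering, and the grid size are assumptions made for the example; production systems typically use a library implementation and more refined LBP variants.

```python
import numpy as np


def lbp_image(gray):
    """Basic 3x3 LBP codes for the interior pixels of a 2D grayscale array."""
    gray = np.asarray(gray, dtype=np.int32)
    h, w = gray.shape
    center = gray[1:-1, 1:-1]
    # Eight neighbours, clockwise from the top-left corner (ordering is a convention).
    offsets = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]
    codes = np.zeros_like(center)
    for bit, (dy, dx) in enumerate(offsets):
        neighbour = gray[1 + dy : h - 1 + dy, 1 + dx : w - 1 + dx]
        # Set the bit when the neighbour is at least as bright as the center pixel.
        codes |= (neighbour >= center).astype(np.int32) << bit
    return codes


def patch_histograms(codes, grid=(2, 2)):
    """Split the code map into a grid and return one normalized 256-bin histogram per patch."""
    hists = []
    for rows in np.array_split(codes, grid[0], axis=0):
        for patch in np.array_split(rows, grid[1], axis=1):
            hist = np.bincount(patch.ravel(), minlength=256).astype(float)
            hists.append(hist / max(hist.sum(), 1.0))
    return np.stack(hists)


# Usage on a synthetic image (stand-in for an aligned face crop).
rng = np.random.default_rng(0)
face = rng.integers(0, 256, size=(34, 34))
features = patch_histograms(lbp_image(face), grid=(4, 4))
print(features.shape)  # (16, 256)
```

Keeping one histogram per patch is what makes the descriptor useful under occlusion: a covered patch corrupts only its own histogram, so the remaining patches can still be compared.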

Occlusion Aware Face Recognition (OAFR)

In this group, FR uses only the visible parts of the face, while the occluded area is ignored. It incorporates two groups of techniques. The first, Occlusion Detection Based Face Recognition, initially detects the occluded area and then extracts the necessary data from the non-occluded parts. It uses a 1-NN (nearest neighbor) threshold classifier and selective local non-negative matrix factorization, among other techniques.
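The idea of ignoring the occluded area can be sketched as a nearest-neighbour match restricted to visible patches. This is a simplified illustration, not the method of any cited paper: the occlusion detector is assumed to exist and is represented by a hypothetical boolean `visible` mask, features are assumed to be stored as one row per face patch, and the names `masked_distance` and `identify` are made up for the example.

```python
import numpy as np


def masked_distance(probe, gallery_item, visible):
    """Mean squared difference computed over visible (non-occluded) patches only."""
    visible = np.asarray(visible, dtype=bool)
    if not visible.any():
        return float("inf")  # nothing usable to compare
    probe = np.asarray(probe, dtype=float)
    gallery_item = np.asarray(gallery_item, dtype=float)
    diff = probe[visible] - gallery_item[visible]
    return float(np.mean(diff ** 2))


def identify(probe, visible, gallery, threshold):
    """1-NN over visible patches; the label is None when the best match exceeds the threshold."""
    best_label, best_dist = None, float("inf")
    for label, features in gallery.items():
        dist = masked_distance(probe, features, visible)
        if dist < best_dist:
            best_label, best_dist = label, dist
    if best_dist > threshold:
        return None, best_dist
    return best_label, best_dist


# Toy gallery: 4 patches per face, 3 feature values per patch.
gallery = {"alice": np.zeros((4, 3)), "bob": np.ones((4, 3))}
probe = np.zeros((4, 3))
probe[2:] = 5.0  # the lower two patches are covered by an occluder
print(identify(probe, [True, True, False, False], gallery, threshold=1.0))
```

In this toy setup the occluded patches would pull the probe toward the wrong identity if they were included, which is why the mask is applied before the distance is computed.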

The second, Partial Face Recognition, uses the partially visible face areas. It utilizes feature extraction with Multiscale Double Supervision Convolutional Neural Network (MDSCNN), Radial Basis Function components, multi-keypoint descriptors, and so on.

Occlusion Recovery-Based Face Recognition (ORBFR)

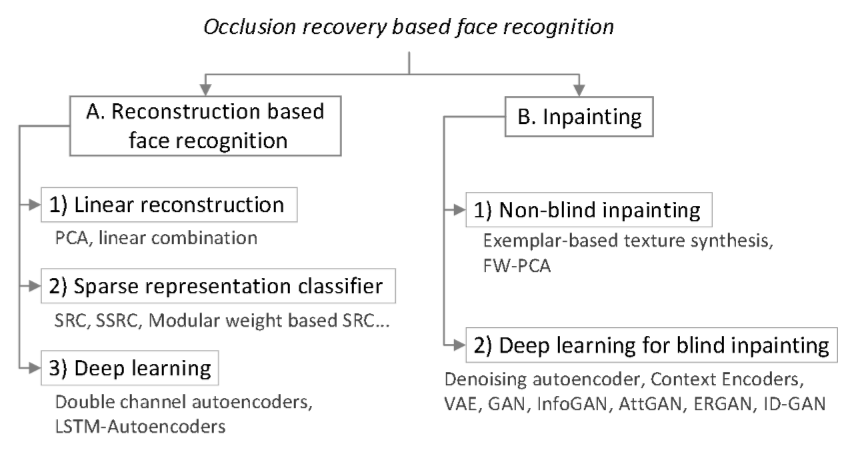

ORBFR is based on recreating the missing facial parts. It includes Reconstruction and Inpainting (fixing the image) approaches. They employ Structured Sparse Representation-Based Classification (SSRC), Long Short-Term Memory (LSTM), Fast Weighted Principal Component Analysis (FW-PCA), etc.
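A minimal sketch of the reconstruction idea follows, assuming flattened, aligned face images and a known occlusion mask. It uses plain PCA with a least-squares fit on the visible pixels, which is a simpler stand-in for the SSRC, LSTM, and FW-PCA techniques named above; the function names are illustrative.

```python
import numpy as np


def fit_pca(train, n_components):
    """Return (mean, components) from rows of flattened training faces."""
    mean = train.mean(axis=0)
    # Rows of vt are orthonormal principal directions of the centered data.
    _, _, vt = np.linalg.svd(train - mean, full_matrices=False)
    return mean, vt[:n_components]


def reconstruct_occluded(face, visible, mean, components):
    """Estimate PCA coefficients from visible pixels, then fill in the occluded ones."""
    visible = np.asarray(visible, dtype=bool)
    basis_visible = components[:, visible].T  # shape: (n_visible, n_components)
    target = face[visible] - mean[visible]
    coeffs, *_ = np.linalg.lstsq(basis_visible, target, rcond=None)
    estimate = mean + coeffs @ components
    restored = face.copy()
    restored[~visible] = estimate[~visible]  # observed pixels stay untouched
    return restored
```

The restored image can then be passed to an ordinary recognizer. The quality of the fill depends on how well the training faces span the probe and on having enough visible pixels to determine the coefficients.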

Methods to Solve Partial Occlusion

A set of techniques can be used to solve the issue in accordance with the liveness certification.

Part Based Methods

Part-based methods assume that an image can be divided into a group of overlapping or non-overlapping parts, each of which undergoes the recognition process. An array of methods can be used in this context:

- Non-metric partial similarity. When two images are compared, differences between them are removed. This is done to retrieve intra-personal details. It employs a Self-Organizing Map (SOM), various distance measures, etc.

- 2D-PCA. Two-dimensional principal component analysis explores the image rows, which in turn correspond to the rows in the feature matrix.

- SVM classification. The partial (i.e., modified) Support Vector Machine is used for reconstructing the missing parts.

- LGBP. Local Gabor Binary Pattern is coupled with Kullback-Leibler Divergence (KLD) to estimate the probability that a part of the face is occluded. The pipeline also involves multiscale image conversion, histogram calculation for every local component, etc.
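The role of KLD in the last item can be sketched as follows: each local histogram of a probe is compared with a reference histogram for the same region, and a large divergence is treated as a sign of occlusion. The mapping from divergence to weight (`exp(-scale * divergence)`), the smoothing constant, and the function names are assumptions made for this example, not details taken from the LGBP literature.

```python
import numpy as np


def kl_divergence(p, q, eps=1e-10):
    """KL divergence D(p || q) between two histograms, smoothed and renormalized."""
    p = np.asarray(p, dtype=float) + eps
    q = np.asarray(q, dtype=float) + eps
    p /= p.sum()
    q /= q.sum()
    return float(np.sum(p * np.log(p / q)))


def occlusion_weights(probe_hists, reference_hists, scale=1.0):
    """Map each region's divergence from its reference to a weight in (0, 1]."""
    divergences = np.array(
        [kl_divergence(p, r) for p, r in zip(probe_hists, reference_hists)]
    )
    return np.exp(-scale * divergences)


# Two regions: the first matches its reference, the second looks very different.
reference = [[0.5, 0.5], [0.5, 0.5]]
probe = [[0.5, 0.5], [1.0, 0.0]]
print(occlusion_weights(probe, reference))
```

Regions with low weights would then contribute less to the final similarity score, so a scarf or sunglasses degrade the match less than they would under uniform weighting.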

Other face liveness detection techniques are based on subspace learning, Selective Local Non-Negative Matrix Factorization (S-LNMF), Posterior Union Model (PUM), and so forth.

Fractal Based Methods

A noteworthy example is the Partitioned Iterated Function System (PIFS). PIFS on its own can be hindered by distortion caused by occlusions, so a face should be divided into separate regions: nose, mouth, eyes, etc. Then, an ad hoc distance measure is used to remove any distortions that occur, and PIFS can focus on searching for correspondences that small square regions may share.

Feature Based Methods

This set of methods concentrates on individual features which may be unique from person to person, such as the area encircling the eyes, while ignoring other features. They rely on isolated Eigenspace analysis, soft masking for outlier classification, guided label learning, SVM + Gaussian summation kernel tandem, etc.

Periocular and Masked Face Recognition

The periocular approach focuses on specific regions. For example, for near-infrared images, eyelids, tear ducts, and eye shape play a major role; for visible-wavelength (VW) images, skin and blood vessels are important. This method extracts (a) global features pertaining to the face or a region of it, and (b) local features formed by a group of discrete points. In addition, it analyzes color, texture, and shape features.

Deep Learning in Partial Face Recognition

A promising method based on deep learning is called Dynamic Feature Matching. It relies on a Fully Convolutional Network (FCN), which comprises convolutional and pooling layers. Another vital component is Sparse Representation Classification (SRC), applied to achieve alignment-free dynamic feature matching. The FCN itself is optimized with the sliding loss, which reduces the intra-variation between a face patch and a face image.
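The SRC step can be illustrated in isolation. The sketch below is a generic sparse representation classifier, not the Dynamic Feature Matching pipeline itself: dictionary columns stand in for gallery feature vectors (which in the original method would come from the FCN), the sparse code is found with a basic ISTA loop, and the regularization weight `lam`, the iteration count, and the function names are assumptions.

```python
import numpy as np


def sparse_code(dictionary, probe, lam=0.01, n_iter=500):
    """ISTA for min_w 0.5 * ||probe - dictionary @ w||^2 + lam * ||w||_1."""
    # Step size is 1 / Lipschitz constant of the gradient (squared spectral norm).
    step = 1.0 / np.linalg.norm(dictionary, 2) ** 2
    w = np.zeros(dictionary.shape[1])
    for _ in range(n_iter):
        grad = dictionary.T @ (dictionary @ w - probe)
        z = w - step * grad
        w = np.sign(z) * np.maximum(np.abs(z) - step * lam, 0.0)  # soft threshold
    return w


def src_classify(dictionary, labels, probe, lam=0.01):
    """Assign the class whose atoms alone give the smallest reconstruction residual."""
    labels = np.asarray(labels)
    w = sparse_code(dictionary, probe, lam)
    residuals = {}
    for label in np.unique(labels).tolist():
        w_class = np.where(labels == label, w, 0.0)  # keep only this class's coefficients
        residuals[label] = float(np.linalg.norm(probe - dictionary @ w_class))
    return min(residuals, key=residuals.get), residuals


# Toy gallery: 8 unit-norm feature vectors, 4 per identity.
rng = np.random.default_rng(0)
D = rng.normal(size=(30, 8))
D /= np.linalg.norm(D, axis=0)
labels = [0, 0, 0, 0, 1, 1, 1, 1]
probe = 0.7 * D[:, 1] + 0.3 * D[:, 2]  # built from identity 0's atoms only
predicted, residuals = src_classify(D, labels, probe)
print(predicted)
```

Because the probe is explained by a few gallery atoms rather than compared position by position, this style of matching does not require the probe patch and the gallery image to be aligned.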

Classifying Facial Races with Partial Occlusions

A specific CNN model that features 10 layers and utilizes receptive fields and weight sharing has been proposed for race detection. It was trained with 96,000 sample images, which proportionately represent four human races: Caucasian, Mongolian, Indian, and African. It is reported to demonstrate 95.1% accuracy at identifying race in images with facial occlusions and pose variations.

