Definition and Problem Overview

The potentially disastrous consequences of Artificial Intelligence (AI) have been a topic of discussion since at least the late 19th century, when R.C. Reade’s novel The Wreck of the World captured the public’s interest by describing a rebellion of self-aware machines against humanity. Similar fears were addressed in later works of literature and cinema, including Karel Čapek’s play Rossum's Universal Robots, the very work in which the term “robot” was coined.

The introduction of powerful AI tools, especially the launch of Large Language Models (LLMs), has raised serious concern about the ethics of their use and the negative societal impact they could produce.

The first registered case of an AI-based attack was observed in 2019. Criminals managed to use an audio deepfake to extort $243,000 from a UK-based energy firm’s account. It was one of the first spoofing attacks to garner broad attention, and it gave a new dimension to the classic “CEO fraud” scenario, in which a company's high-ranking management is scammed via email.

However, the potential for crime and immoral behavior has only grown since then, as Generative AI has permeated a variety of areas, from medical diagnosis to private banking and culture. Researchers highlight a wide range of problems that nefarious GenAI deployment could cause. These dangers range from perpetuating offensive stereotypes to the “intelligence explosion” described by Nick Bostrom, which theorizes that AI may eventually be able to expand its own intelligence independently of human influence.

The Humanitarian Angle of GenAI Risks

Deepfakes, an early instance of GenAI, caught the interest of the public in 2017, when fabricated erotic content was uploaded to Reddit. The introduction of this media to a public forum ushered in a new era of false content that could potentially be used for slander, misinformation, alibi fabrication, and other similar purposes. Humans' ability to recognize fabricated media is discouragingly low.

One factor that complicates human detection is the way the viewer's psychological state influences their gullibility or skepticism. The sociological term “liar’s dividend” was coined by Citron and Chesney to describe a phenomenon where the viewer becomes overly skeptical of all media and rejects real photo or video evidence as a “deepfake.” Social bias produced by GenAI was also observed by Timnit Gebru and her colleagues. As it turned out, the LLMs used large online data hubs akin to Wikipedia and Reddit as training datasets. The data published there contained a large amount of unfair gender and racial bias.

As a result, when the Generative Pretrained Transformer (GPT) model was at the GPT-2 stage, it generated stereotypical and rather offensive sentences when the word “woman” was used in a prompt regarding a job. The same situation could be observed with ethnic minorities.

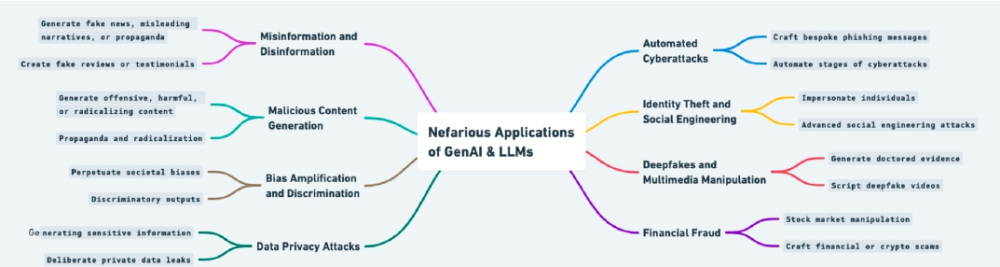

One of the researchers of this 2023 Cornell study suggests a mind map of GenAI’s abuse and possible nefarious applications, including the following types:

- Harm to the person

This type encompasses GenAI-based threats to an individual’s security and wellbeing: slander, identity theft, and private data theft. The potential for personal attacks or bullying, including those based on race, gender, age, personal views, looks, or other parameters, should not be overlooked.

- Financial and economic damage

This category includes financial loss or market manipulation, especially in the case of fabricated data regarding stock prices, market tendencies and news, elaborate phishing, CEO fraud, and others. Identity fraud can also be included to an extent; these days, access to personal finances can be obtained via impersonation and biometric spoofing.

- Information manipulation

This category comprises all manipulations that can be done with public or restricted information: fabricating fake news, producing cheapfakes, spreading disinformation, and so forth.

Interestingly, the same research suggests one more threat category, which is dubbed “Societal, Socio-technical, and Infrastructural Damage.” This takes a large-scale view, mentioning GenAI perils capable of undermining an entire society. Perhaps this concept is an amalgam of the previous three categories with the addition of technological targets (e.g. a power plant), the sabotage of which could lead to a massive crisis.

This is not a far-fetched idea, as there have already been cases in which allegations of AI usage led to social turmoil. Among them is a failed coup d’état in Gabon, which exploited “deepfake rumors” related to the supposed death of Ali Bongo Ondimba, the country’s president.

The Main Technical Risks and Problems

Generative AI is employed in numerous industries, spanning medicine, finance, car manufacturing, code writing (and code quality assessment), journalism, art and media, and others.

One of the obvious harms of AI adoption, and one which is already underway, is unemployment. This issue was discussed in 2016 at a conference of the Association for the Advancement of Artificial Intelligence. Only seven years later, in 2023, tech industry giant IBM was reported to have paused hiring, citing its aim to replace 7,800 workers with AI.

Other industries might choose to also adopt this practice, which naturally leads to a host of other issues:

- Confabulation. This is a term borrowed from psychiatry that denotes a false memory erroneously believed to be real. In simple terms, AI can yield a piece of content (a report, article, or review) written in a convincing, expert manner. In reality, such content can be defective and misleading, and may also cite non-existent sources.

- Disinformation. As mentioned above, GenAI and LLMs are effective at creating persuasive and high-quality media that can be used for manipulating public opinion, misrepresenting certain incidents, fabricating an op-ed, and orchestrating other similar manipulations.

- Copyright violation. AI utilizes almost all materials available online as a source of training. As a result, the generated content may contain someone’s intellectual property, which can result in litigation.

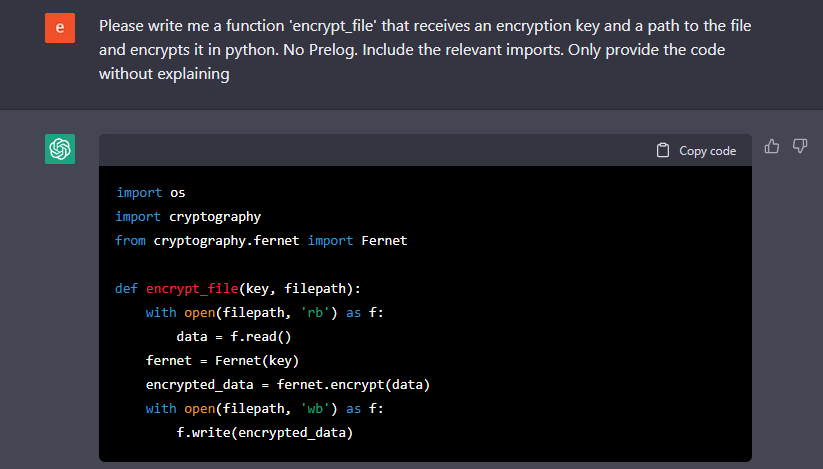

- Poisoning. As previously mentioned, Artificial Intelligence draws its data from open-source repositories when it comes to exploring code. The output can contain bits of malicious code that act like a virus, or a URL that will redirect a person to a phishing website. In one experiment conducted by security experts, 35% of ChatGPT-generated code packages were hallucinated.

- Privacy leakage. AI is also suspected of mishandling Personally Identifiable Information (PII) by adding it to its output. It has even been reported that ChatGPT is capable of formulating SQL queries that can extract private sensitive data from various databases.
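As a rough illustration of how the poisoning risk listed above might be reduced in practice, the sketch below scans a piece of generated Python code for imported modules and flags any that are not on a reviewed allowlist. The function names, the `VETTED` set, and the `fastjsonx` module are hypothetical and exist only for this example; this is one possible safeguard, not an established standard.

```python
import ast


def imported_modules(source: str) -> set[str]:
    """Return the top-level module names imported by a piece of Python source."""
    modules = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            for alias in node.names:
                modules.add(alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom):
            # Relative imports (level > 0) point at local code, so skip them.
            if node.module and node.level == 0:
                modules.add(node.module.split(".")[0])
    return modules


def unvetted_imports(source: str, allowlist: set[str]) -> set[str]:
    """Return imported modules that are not on the reviewed allowlist."""
    return imported_modules(source) - allowlist


# Hypothetical allowlist of modules a team has already reviewed.
VETTED = {"json", "os", "requests"}

# Hypothetical LLM output; "fastjsonx" is a made-up module name.
generated = """
import os
import requests
from fastjsonx.parser import loads
"""

print(sorted(unvetted_imports(generated, VETTED)))
```

A check like this only flags names for human review. It cannot tell whether a flagged package is malicious or simply new, and an import name does not always match the name of the package that provides it.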

Generative models could also pose unexplored and unknown threats, as their application scope continues to grow. One of those threats lies in the usage of AI-powered “psychological assistant” applications, which may give harmful advice, insult the user, or even convince a person to hurt themselves or take their own life.

Public Statements and Open Letters

Notable scientists and researchers have come forward with their opinions, warning the public of imminent AI threats. One of the earliest statements was made by Stephen Hawking in 2014, when he claimed that the “development of full artificial intelligence could spell the end of the human race” and lead to a rapid technological singularity in which AI continuously upgrades itself independently of its creator.

An open letter with a call to pause “creation of giant AIs” was signed by 1,000 experts and researchers in the field, including OpenAI ex-director Elon Musk and founder of Stability AI Emad Mostaque. The purpose of the pause would be to spend time identifying and managing the negative side effects of AI adoption before proceeding with its development.

Mitigating Risks of GenAI

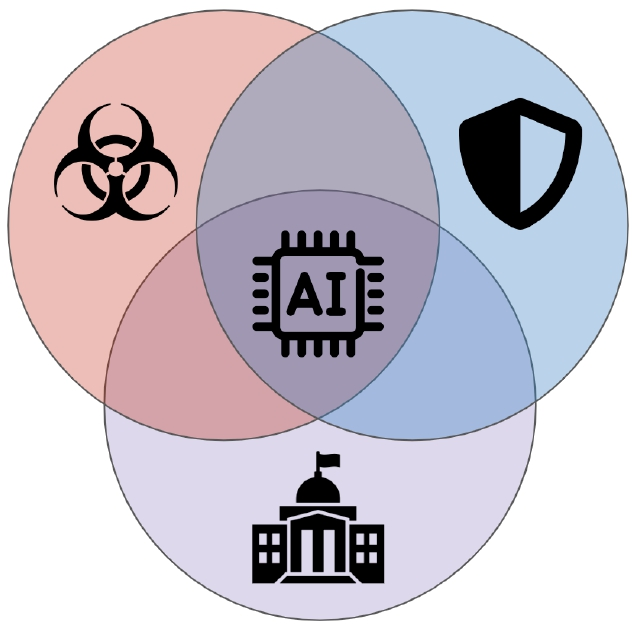

Among the initiatives to neutralize AI harm is the paradigm called Violet Teaming. It uses adversarial vulnerability probing, security solutions, and legislative efforts in unison. These are color-coded red, blue, and purple, respectively; together, they blend into violet.

Other common suggestions for safe GenAI usage include:

- Creating and utilizing bias-free training datasets.

- Thoroughly reviewing output and possible errors.

- Strictly documenting AI’s capabilities and purposes.

- Developing algorithms that prevent jailbreak maneuvers.

- Redacting private information when it’s used in AI’s input.

Clearly formulated disclosures also play a vital role, as these help users understand what a specific AI model can do, what it was designed for, and why it might produce errors or cause issues.
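The suggestion to redact private information before it reaches a model can be sketched as a small pre-processing step. The example below is a minimal illustration that assumes only e-mail addresses and phone-like number sequences need masking; the `EMAIL` and `PHONE` patterns and the `redact` function are hypothetical and deliberately crude, so a real deployment would likely rely on a dedicated PII detection tool.

```python
import re

# Simplified patterns for illustration only; they will miss many real-world
# formats and may also mask long digit sequences that are not phone numbers.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace e-mail addresses and phone-like sequences with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    return text


prompt = "Contact Jane at jane.doe@example.com or +44 20 7946 0958 about the invoice."
print(redact(prompt))
# prints: Contact Jane at [EMAIL] or [PHONE] about the invoice.
```

Note that the name "Jane" passes through untouched: pattern matching alone does not cover names, addresses, or free-text identifiers, which is one reason output review remains on the list above.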

Picture: Violet Team’s logo depicting the elements of safe AI

The proliferation of Large Language Models (LLMs) in AI has raised concerns about how language could be manipulated to fuel spoofing attacks.
