Definition & Problem Overview

Remote identity proofing (RIDP) is the process of collecting and verifying information about a person’s identity online. It is vital for digital onboarding, Know Your Customer (KYC) compliance, and other online services. RIDP depends heavily on analyzing biometric data such as facial features and fingerprints, together with liveness indicators.

At the same time, RIDP suffers from a number of vulnerabilities, the biggest of which is the Presentation Attack (PA). A PA relies on specific tools, called Presentation Attack Instruments (PAIs), that imitate a target person and are literally presented to the sensors of a verification system.

Presentation Attack Instruments (PAIs) include a wide variety of tools: silicone masks, printed cutouts, replayed videos, and digital deepfake manipulations. It is therefore essential for an RIDP system to provide fast and highly accurate liveness detection.

According to a report by the European Union Agency for Cybersecurity (ENISA), deepfakes and replay attacks made with high-definition screens represent the biggest threat to modern RIDP solutions.

According to a survey conducted by iProov, 51.9% of respondents worry that deepfakes will be used to steal their identity and open fraudulent credit cards in their name. At the same time, 81.3% of respondents believe that biometrics will be used for identity proofing in the future.

Apart from ENISA, other official bodies are also concerned about identity theft performed with fabricated media. In 2021, the US Department of Homeland Security released a comprehensive report on existing deepfake and cheapfake technologies and the threats they pose.

Attack Types Aimed at RIDP

ENISA’s report outlines four primary attack types that can compromise remote identity proofing.

Photo attacks

In liveness taxonomy, a photo attack is possibly the most primitive technique: a photo of the target person, printed or shown on a high-resolution screen, is presented to the camera of an RIDP system. It is relatively easy to spot, as photos lack the properties of a living person’s face: depth, natural shadows, skin texture, retinal light reflection, etc.

Video replay attacks

Video replay attacks involve a video of either the attacker’s or the target’s face that is replayed to the RIDP system on a high-resolution screen. The video can be stolen, pre-recorded, or synthesized using deepfake tools.

3D mask attacks

3D masks used for presentation attacks range in quality from cheap, easy-to-make cutouts to elaborate masks produced using 3D printing. Both simple and elaborate masks are becoming easier for fraudsters to obtain. For example, a highly realistic mask made by the Japanese company Kamenya Omoto costs less than $1,000.

Deepfake attacks

According to a report, the number of highly realistic deepfakes is growing nearly exponentially, doubling roughly every six months. Because some deepfake tools are easy to obtain, it is hard to estimate how many synthetic videos exist at the moment.
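
To make the doubling claim concrete, here is a minimal sketch of the arithmetic. The starting count of 10,000 is a hypothetical figure chosen for illustration and does not come from the report; only the six-month doubling period is taken from the text.

```python
def projected_count(initial_count: float, months: float,
                    doubling_months: float = 6.0) -> float:
    """Project a quantity that doubles every `doubling_months` months."""
    return initial_count * 2 ** (months / doubling_months)


# Hypothetical baseline, for illustration only (not a figure from the report).
baseline = 10_000

for months in (6, 12, 24):
    print(months, round(projected_count(baseline, months)))
# 6 20000
# 12 40000
# 24 160000
```

Under this assumption, the count grows sixteenfold in two years, which illustrates why any point-in-time estimate becomes outdated quickly.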

Anatomy of a Deepfake Attack

Deepfake attacks typically follow the same scenario:

- Harvesting. In this phase, source images or videos of a target victim are collected. They are mostly harvested from publicly accessible platforms such as Instagram, Facebook, Telegram, YouTube, LinkedIn, and others.

- Training. The collected visual samples (the dataset) are "fed" to specialized software. This can be a simple application like Reface or a sophisticated neural network that requires specific skills and knowledge to operate. From the dataset, these tools learn the fundamental traits of a target person’s appearance: facial features, expressions, mannerisms, and so on.

- Altering. The original images will be processed with various digital manipulation techniques: identity swapping, face morphing, attribute manipulation, etc.

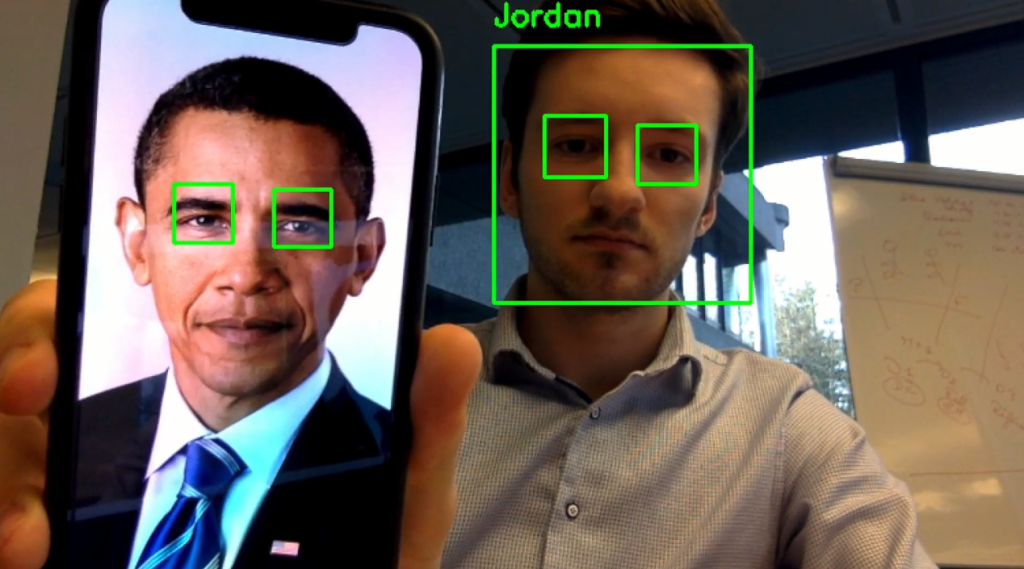

- Attack. Finally, fraudsters have two ways of mounting an attack. The first is a basic PA, in which synthetic media is presented to an evidence-based proofing system. The second involves injecting the fabricated video directly into the camera’s stream.

It is worth noting that while the second attack method requires extra effort (popularly referred to as "hacking"), it also gives fraudsters more room to maneuver. For example, they can employ a puppeteering technique in real time to fool a challenge-based proofing system that prompts a user to blink, nod, or smile to get verified.

Face2Face, an approach presented in 2016 by researchers from the University of Erlangen-Nuremberg, the Max Planck Institute for Informatics, and Stanford University, is a good example of such a tool. It tracks facial expressions in both a source and a target video and allows a "facial reenactment of a monocular video sequence". As a result, it can re-render a photorealistic output video with real-time face alterations such as lip-syncing.

Despite the threat they pose, video deepfakes are not the only relevant attack instrument. Audio deepfakes appear to be even more successful at achieving criminal goals. The first known case of an audio deepfake successfully deceiving a human operator took place in 2019, when £200,000 was ordered to be wired to a fraudulent bank account.

Just like a video deepfake, its audio counterpart requires a large dataset of audio samples to train a deep learning model. The samples can be obtained from recorded phone calls, voice messages, or applications such as Clubhouse and Twitter Spaces that provide audio rooms.

Countermeasures

Biometric liveness detection is seen as the most effective remedy against deepfake attacks on remote identity proofing systems. Liveness detection is usually categorized into two types:

Active approach

Active techniques are used in challenge-based systems, which prompt an applicant to perform a random action such as following a dot on the screen with their eyes, waving a hand rapidly, turning their head, nodding, or blinking. As noted in the ENISA report, rapid movements help reveal a deepfake: the software producing it cannot keep up with quick motion, which results in blurring, warping, distortion, and other unnatural artifacts.

Overlapping objects (occlusion) are another case that deepfake tools struggle with. It is therefore recommended to prompt an applicant to "place a hand or an object in front of the face", as this results in heavy visible distortion in the case of a puppeteered deepfake.
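
The challenge-based flow described above can be sketched roughly as follows. This is an illustrative outline, not the design of any particular product: the challenge names, the `Challenge` type, the five-second timeout, and the idea that some separate vision component reports an `observed_action` are all assumptions made for the example.

```python
import secrets
import time
from dataclasses import dataclass

# Hypothetical challenge names based on the actions listed in the text.
CHALLENGES = ("follow_dot", "wave_hand", "turn_head",
              "nod", "blink", "cover_face_with_hand")


@dataclass(frozen=True)
class Challenge:
    action: str        # what the applicant is asked to do
    nonce: str         # ties the response to this session
    issued_at: float   # seconds on a monotonic clock
    timeout_s: float   # how long the applicant has to respond


def issue_challenge(timeout_s: float = 5.0) -> Challenge:
    """Pick an unpredictable action so a pre-recorded video cannot anticipate it."""
    return Challenge(
        action=secrets.choice(CHALLENGES),
        nonce=secrets.token_hex(16),
        issued_at=time.monotonic(),
        timeout_s=timeout_s,
    )


def response_is_acceptable(challenge: Challenge, observed_action: str,
                           nonce: str, responded_at: float) -> bool:
    """Accept only the requested action, for this session, within the time limit.

    `observed_action` is assumed to come from a separate (hypothetical)
    video-analysis step that is outside the scope of this sketch.
    """
    elapsed = responded_at - challenge.issued_at
    return (
        secrets.compare_digest(nonce, challenge.nonce)
        and observed_action == challenge.action
        and 0.0 <= elapsed <= challenge.timeout_s
    )
```

Choosing the action with a cryptographically secure random source, and binding it to a nonce and a short deadline, is what prevents an attacker from preparing the response in advance. Checking the video for the blurring and warping artifacts mentioned above would be a separate step.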

However, active liveness detection has its own disadvantages: increased customer friction, suspicion and misunderstanding on the user’s side, and the insight it gives fraudsters into its internal workings.

Passive approach

Many experts regard passive detection as the more favorable approach to liveness detection, and it is applied widely, from anti-spoofing for IoT to public security. It offers a broad range of detection techniques that run without the user noticing them. Passive detection mostly focuses on signs of life such as blinking and the light reflection properties intrinsic to the human eye or skin.

For example, it is possible to detect an impersonation by analyzing the wavelength of the light reflected off the retina, an effect known as the "bright pupil feature". Another method estimates facial depth by analyzing the low-frequency components of its spectrum, something that a deepfake or mask would not have. Both techniques can be assisted by simple smartphone flash lighting.

Heart rate estimation is also a promising passive antispoofing method. It detects subtle color variations in the human face caused by oxygen saturation, heart activity, and blood circulation. Current deepfakes commonly lack these intrinsic human characteristics.
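
As a rough illustration of the idea, the sketch below looks for a periodic component in a sequence of per-frame color values, for example the mean green-channel intensity of the face region. The function name, the 40 to 180 beats-per-minute band, and the use of a plain discrete Fourier transform are assumptions made for this example. Published methods are considerably more elaborate, and extracting the face region from video is not shown.

```python
import math


def estimate_bpm(signal, fps, min_bpm=40.0, max_bpm=180.0):
    """Return the strongest periodic component of `signal` in beats per minute.

    `signal` holds one color value per video frame (a hypothetical input,
    e.g. mean green intensity of the face region). Returns None when no
    periodic component is found inside the plausible pulse band.
    """
    n = len(signal)
    if n < 2:
        raise ValueError("need at least two samples")
    mean = sum(signal) / n
    centered = [x - mean for x in signal]  # drop the constant skin-tone level

    best_bpm, best_power = None, 0.0
    for k in range(1, n // 2 + 1):          # naive DFT, one frequency bin at a time
        bpm = (k * fps / n) * 60.0
        if not (min_bpm <= bpm <= max_bpm):
            continue
        re = sum(c * math.cos(2 * math.pi * k * i / n) for i, c in enumerate(centered))
        im = sum(c * math.sin(2 * math.pi * k * i / n) for i, c in enumerate(centered))
        power = re * re + im * im
        if power > best_power:
            best_bpm, best_power = bpm, power
    return best_bpm


# Synthetic 10-second clip at 30 fps with a faint 1.2 Hz (72 bpm) pulse.
fps = 30
frames = [100.0 + 0.5 * math.sin(2 * math.pi * 1.2 * i / fps) for i in range(300)]
print(estimate_bpm(frames, fps))
```

On real footage the signal is noisy, so a practical detector would also need a threshold on how strongly the peak stands out before treating it as a pulse. That decision rule is left out here.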

Liveness Detection Criticism & Safe Ecosystem

Research by Ann-Kathrin Freiberg of BioID notes that biometric detection does not always succeed in detecting deepfakes. According to the research, the ISO/IEC 30107-3 biometric standard does not specifically address deepfake attacks. It claims that fake videos injected into the video stream (an application-level attack) pose a far more serious threat than regular manipulated media.

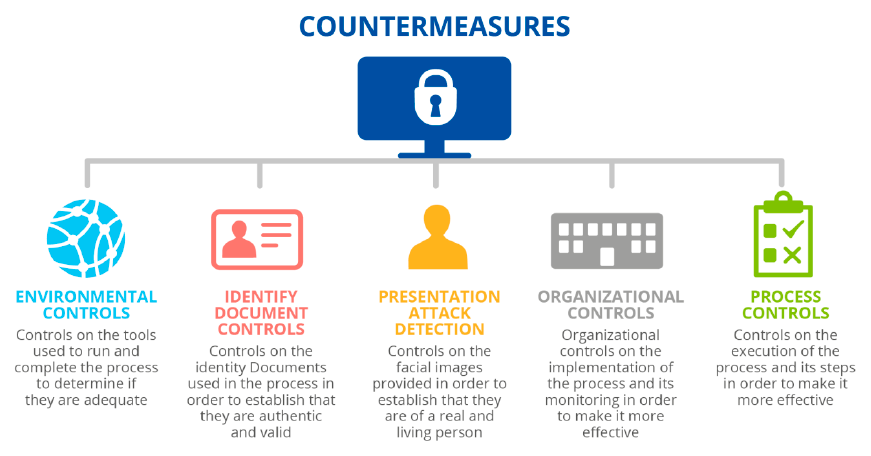

ENISA’s report points to the urgency of creating a global ecosystem that guarantees safe and secure remote identity proofing. It includes five key elements: environment controls, identity document controls, presentation attack detection, organizational controls, and process controls. Together they can mitigate deepfake usage and document forgery.

References

- Remote Identity Proofing - Attacks & Countermeasures

- The Threat of Deepfakes

- Increasing Threats of Deepfake Identities

- Japanese Company Now Offers Ultra Realistic 3D-Printed Masks of Human Faces

- Realistic 3D mask produced by Kamenya Omoto

- Report: number of expert-crafted video deepfakes double every six months

- Face2Face: Real-time Face Capture and Reenactment of RGB Videos

- FaceRig app employed a similar puppet technique as Face2Face

- Listen carefully: The growing threat of audio deepfake scams

- Exploiting Visual Artifacts to Expose Deepfakes and Face Manipulations

- Insight on face liveness detection: A systematic literature review

- Deepfakes can be detected by analyzing light reflections in eyes, scientists say

- An overview of face liveness detection

- Example of the blinking analysis

- DeepFakes Detection Based on Heart Rate Estimation: Single- and Multi-frame
