General Overview

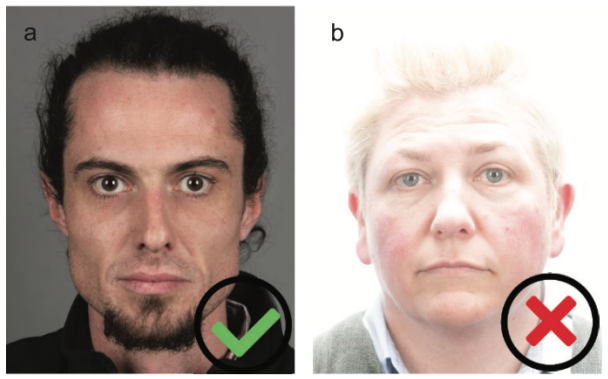

In face anti-spoofing, a makeup presentation attack (M-PA) is a type of presentation attack in which perpetrators use non-permanent facial alterations to bypass a security system based on facial recognition. The purpose of such an attack is either to gain access to a system as a targeted person or to conceal the perpetrator’s true identity. Makeup PAs often employ toupees, fake facial hair, eye lenses, and other artifacts that help achieve the greatest possible degree of appearance alteration. Any review of face anti-spoofing that covers attack types, countermeasures and open challenges should therefore examine this elusive threat.

Makeup PAs have a number of advantages that make them more attractive to attackers than other PAs. For example, many liveness detection systems look for the absence of ‘liveness cues’, a typical weakness of standard Presentation Attack Instruments (PAIs) such as masks, printed photos, face cutouts, and replayed photos or videos. Makeup PAs let the impostor keep these liveness cues while disguising their identity, which makes it easier to bypass even an active liveness detection system.

At the same time, makeup PAs require a high level of skill, effort and time. Because the alterations are temporary, the attack has to be carried out soon after the makeup is applied, with all preparations made shortly beforehand. Insufficient skill, or the involvement of multiple makeup artists, can disrupt the attack and cause it to fail.

The Impact of Cosmetics on Facial Recognition

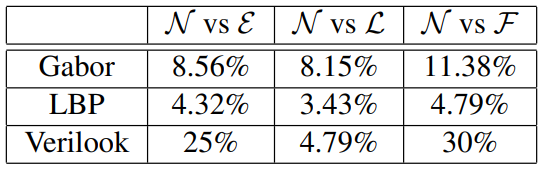

A series of experiments was conducted to assess the performance of facial recognition when presented with M-PAs. The first experiment used the YouTube Makeup Dataset (YMU), which contains 99 subjects, each contributing four images: two with makeup and two without. The Equal Error Rate (EER) for the makeup vs. no-makeup scenario was comparatively high at 23.68%, which indicates that commercial face recognition solutions struggle to match faces across makeup changes. Academic solutions performed somewhat better, possibly because they can be tuned.
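The EER is the operating point at which the False Match Rate (FMR) equals the False Non-Match Rate (FNMR). A minimal sketch of how it can be estimated from comparison scores, assuming higher scores mean more similar faces; the score lists are made-up toy values, not YMU data, and the function names are illustrative.

```python
def error_rates(genuine, impostor, threshold):
    """FMR and FNMR at one threshold; a pair 'matches' when score >= threshold."""
    # False match: an impostor pair is accepted.
    fmr = sum(s >= threshold for s in impostor) / len(impostor)
    # False non-match: a genuine (same-person) pair is rejected.
    fnmr = sum(s < threshold for s in genuine) / len(genuine)
    return fmr, fnmr


def equal_error_rate(genuine, impostor):
    """Approximate the EER by trying every observed score as a threshold and
    keeping the one where FMR and FNMR are closest; returns (eer, threshold)."""
    best = None
    for t in sorted(set(genuine) | set(impostor)):
        fmr, fnmr = error_rates(genuine, impostor, t)
        gap = abs(fmr - fnmr)
        if best is None or gap < best[0]:
            best = (gap, (fmr + fnmr) / 2, t)
    return best[1], best[2]


# Toy scores: genuine = same person (makeup vs. no makeup), impostor = different people.
print(equal_error_rate([0.9, 0.8, 0.75, 0.4], [0.1, 0.2, 0.3, 0.5]))  # -> (0.25, 0.5)
```

Sweeping only the observed scores keeps the sketch simple; with few scores the FMR and FNMR curves rarely cross exactly, so the midpoint of the closest pair is reported.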

Another experiment used the Virtual Makeup Dataset (VMU), with 51 subjects and three post-makeup images per person. Lipstick, eye makeup and full makeup were applied digitally to the images, which were then compared with the no-makeup originals. Lipstick alone produced the lowest distance score, while full facial makeup made matching significantly more challenging. Eye makeup also considerably reduced matching performance.

Types of Makeup Presentation Attacks

In terms of biometric security, makeup can be divided into two types: a) physical and b) digital.

Physical makeup

This type includes both everyday cosmetics and specialized substances used in film and theater. Physical makeup usually targets three primary areas:

- Skin. It is used for accentuating complexion, hiding skin blemishes and wrinkles, outlining facial contour, etc.

- Lip. Lipstick can make the lips appear fuller through visual contrast.

- Eye. Makeup for the periocular region can alter the eye shape, highlight brows/eyelashes, and so on.

It should be noted that regular makeup does not belong to the cohort of PAIs as it is used by millions of individuals for cosmetic, aesthetic or even traditional/ceremonial purposes. (For instance, in China male cosmetics are rooted in an ancient tradition of Chinese opera.)

Specialized makeup relies on additional substances, such as theatrical blood, to imitate scars, wounds, birthmarks, wrinkles, and so on. Despite their specialized purpose, these materials are easy to obtain and include many common items such as corn syrup, food coloring and glycerin.

Digital makeup

Digital face manipulation makes it possible to apply synthetic makeup with applications like MakeUp Plus, L'Oréal’s Makeup Genius, Modiface Photo Editor, and others. Moreover, a Generative Adversarial Network (GAN) can emulate a person's makeup style and digitally transfer it to another person’s face. Such tools can potentially be used for video injection attacks.
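At its simplest, applying digital makeup to a region means blending a cosmetic color into the pixels of that region. The sketch below is purely illustrative: it assumes a precomputed boolean lip mask and an image stored as rows of RGB tuples, and `apply_virtual_lipstick` is a hypothetical function. The apps named above and GAN-based makeup transfer use far more sophisticated models (landmark tracking, texture and lighting synthesis).

```python
def apply_virtual_lipstick(image, mask, color, alpha=0.5):
    """Alpha-blend `color` into every pixel where `mask` is True.

    image: list of rows of (r, g, b) tuples with channels in 0-255
    mask:  list of rows of booleans with the same shape (hypothetical lip mask)
    alpha: blending strength, 0 = no makeup, 1 = solid color
    """
    out = []
    for img_row, mask_row in zip(image, mask):
        new_row = []
        for pixel, inside in zip(img_row, mask_row):
            if inside:
                # Linear blend: (1 - alpha) * original + alpha * cosmetic color.
                pixel = tuple(round((1 - alpha) * p + alpha * c)
                              for p, c in zip(pixel, color))
            new_row.append(pixel)
        out.append(new_row)
    return out


# One masked pixel gets tinted red; the unmasked one is left untouched.
print(apply_virtual_lipstick([[(100, 100, 100), (200, 200, 200)]],
                             [[True, False]], (200, 0, 0)))
```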

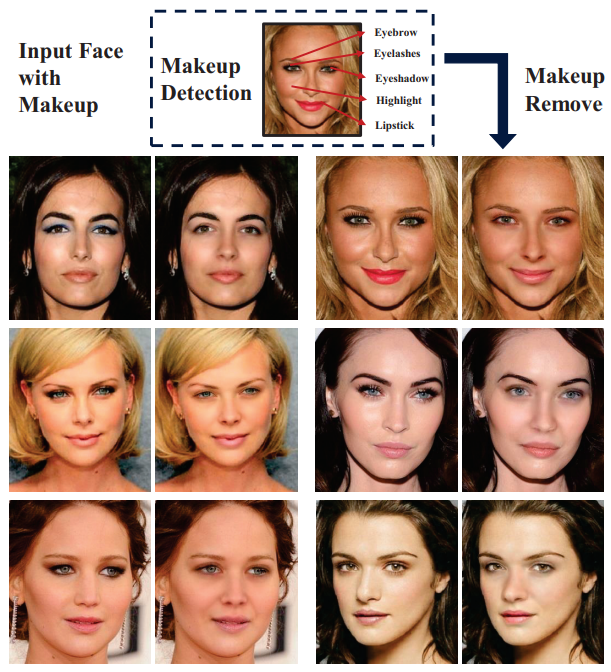

Makeup Detection

One algorithm proposed for makeup detection and removal is based on locality-constrained low-rank dictionary learning. It separates a subject's face into three regions: facial skin, lips and eyes. A group of techniques is then applied, including pair-wise dictionary learning for face regions before and after makeup, Poisson editing, facial landmark alignment, and ratio-image learning to remove reconstruction artifacts.

In simple terms, this method removes the makeup from an image, usually one obtained ‘in the wild’, and reconstructs the subject's original face. This is achieved by training the algorithm on a dataset of face images taken before and after makeup. Such a method could be particularly useful for a passive liveness detection system.
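The core idea of paired (before/after makeup) dictionaries can be illustrated with locality-constrained linear coding: a made-up patch is encoded with its nearest atoms in the "makeup" dictionary, and the same weights are reused on the paired "no-makeup" atoms. This is a simplified sketch, not the cited method: it assumes the paired dictionaries are already learned and patches are flat feature vectors, it omits the low-rank term, Poisson editing and ratio-image steps, and `remove_makeup_patch` is a hypothetical name.

```python
def solve(a, b):
    """Solve a x = b for a small square system (Gaussian elimination, partial pivoting)."""
    n = len(a)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def llc_weights(x, atoms, reg=1e-4):
    """Locality-constrained coding weights of x over its local atoms (sum to 1)."""
    k = len(atoms)
    diffs = [[a_i - x_i for a_i, x_i in zip(atom, x)] for atom in atoms]
    # Local covariance C = (B - x)(B - x)^T, regularized so it stays invertible.
    cov = [[sum(p * q for p, q in zip(diffs[i], diffs[j])) for j in range(k)]
           for i in range(k)]
    trace = sum(cov[i][i] for i in range(k))
    for i in range(k):
        cov[i][i] += reg * (trace if trace > 0 else 1.0)
    w = solve(cov, [1.0] * k)
    total = sum(w)
    return [wi / total for wi in w]


def remove_makeup_patch(patch, makeup_dict, clean_dict, k=2):
    """Hypothetical helper: estimate the no-makeup version of a made-up patch."""
    def sq_dist(atom):
        return sum((a - p) ** 2 for a, p in zip(atom, patch))

    # Locality constraint: only the k nearest makeup atoms take part.
    nearest = sorted(range(len(makeup_dict)), key=lambda i: sq_dist(makeup_dict[i]))[:k]
    w = llc_weights(patch, [makeup_dict[i] for i in nearest])
    # Reuse the weights on the paired no-makeup atoms.
    dim = len(clean_dict[0])
    return [sum(wi * clean_dict[i][d] for wi, i in zip(w, nearest)) for d in range(dim)]
```

For example, a patch halfway between two makeup atoms is mapped halfway between their paired no-makeup atoms, while distant atoms are ignored.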

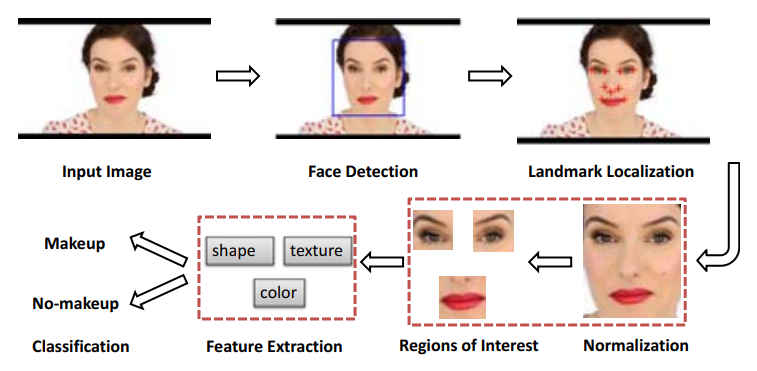

An alternative method works in the Hue/Saturation/Value (HSV) color space, which helps capture makeup information more effectively. The technique consists of the following stages:

- Face detection

- Landmark localization

- Face normalization

- ROI extraction

- Feature extraction

- Feature classification
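Once the ROIs (for example the lips, eye regions and skin) are extracted, a simple color descriptor can be computed for each of them in HSV space. A minimal sketch, assuming each ROI is given as a list of RGB pixels; the mean/standard-deviation descriptor is an illustrative choice, not necessarily the exact features used by the method described here.

```python
import colorsys
from statistics import mean, pstdev


def hsv_color_features(roi_pixels):
    """Mean and standard deviation of H, S and V over one ROI.

    roi_pixels: iterable of (r, g, b) tuples with channels in 0-255.
    Returns [mean_h, std_h, mean_s, std_s, mean_v, std_v], each in 0-1.
    Note: hue is circular, so its plain mean is only a rough summary.
    """
    hsv = [colorsys.rgb_to_hsv(r / 255, g / 255, b / 255) for r, g, b in roi_pixels]
    features = []
    for channel in zip(*hsv):  # iterate over the H, S and V channels
        features += [mean(channel), pstdev(channel)]
    return features


# Saturated red lips: hue 0, full saturation and value, no variation.
print(hsv_color_features([(255, 0, 0), (255, 0, 0)]))
```

The descriptors of all ROIs would then be concatenated and passed to the feature classification stage.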

Additionally, it employs components such as the AdaBoost face detector in OpenCV to find the location and scale of the face in a photo, a Gaussian Mixture Model (GMM) to model the joint probability distribution of facial landmark positions, and Haar-like filters with an AdaBoost classifier to model landmark appearance, among others.
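Haar-like filters, the building blocks of AdaBoost-based (Viola-Jones style) detectors, are differences of rectangle sums that can be computed in constant time from an integral image. A minimal sketch with a grayscale image stored as a list of lists; the single vertical two-rectangle filter shown is one of several Haar-like patterns, the function names are illustrative, and the AdaBoost stage that selects and weights such features is not shown.

```python
def integral_image(img):
    """Summed-area table with a zero border: ii[y][x] = sum of img rows < y, cols < x."""
    h, w = len(img), len(img[0])
    ii = [[0] * (w + 1) for _ in range(h + 1)]
    for y in range(h):
        row_sum = 0
        for x in range(w):
            row_sum += img[y][x]
            ii[y + 1][x + 1] = ii[y][x + 1] + row_sum
    return ii


def rect_sum(ii, x, y, w, h):
    """Sum of the w x h rectangle with top-left corner (x, y), using four lookups."""
    return ii[y + h][x + w] - ii[y][x + w] - ii[y + h][x] + ii[y][x]


def haar_two_rect_vertical(ii, x, y, w, h):
    """Two-rectangle Haar-like feature: top half minus bottom half (h must be even)."""
    half = h // 2
    return rect_sum(ii, x, y, w, half) - rect_sum(ii, x, y + half, w, half)


# A bright band above a dark band (e.g. skin above a dark lip line) gives a strong response.
img = [[1, 1], [1, 1], [0, 0], [0, 0]]
print(haar_two_rect_vertical(integral_image(img), 0, 0, 2, 4))
```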

Databases

There are very few publicly available makeup datasets. Two well-known examples available for download are the YouTube Makeup Dataset (YMU) and the Virtual Makeup Dataset (VMU). EURECOM has its own database, which consists of images taken from various makeup tutorials. A unique aspect of EURECOM’s database is that it illustrates the entire makeup process step by step.

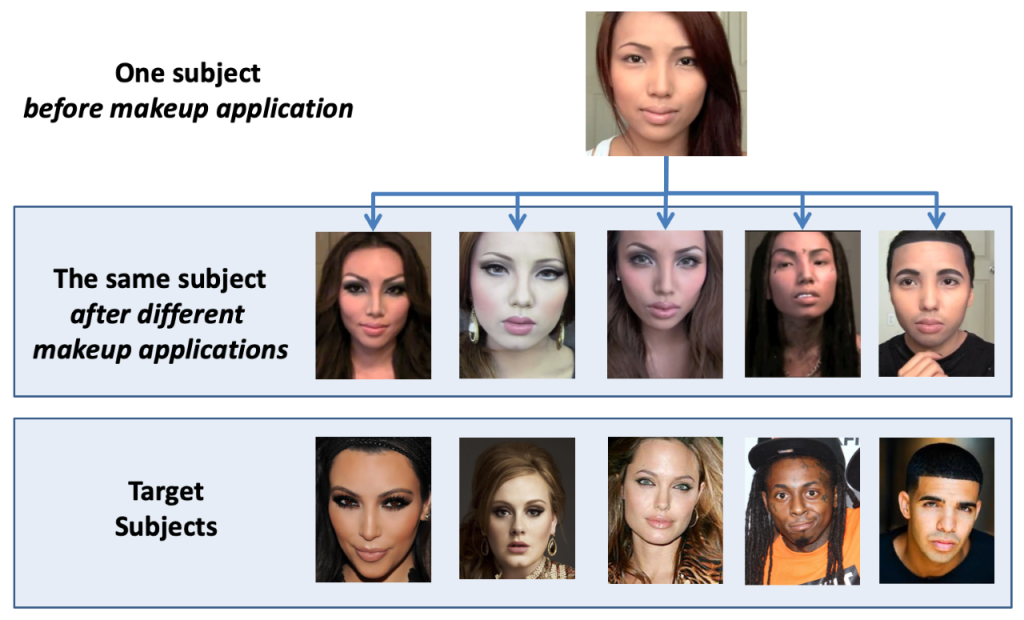

The Age-Induced Makeup database (AIM) includes both bona fide and presentation attack videos featuring makeup. The Makeup In the Wild Database offers images captured in the wild with a wide range of makeup intensities, illumination levels, poses and image quality levels. Makeup Induced Face Spoofing (MIFS) illustrates how a single person can impersonate numerous people through makeup.

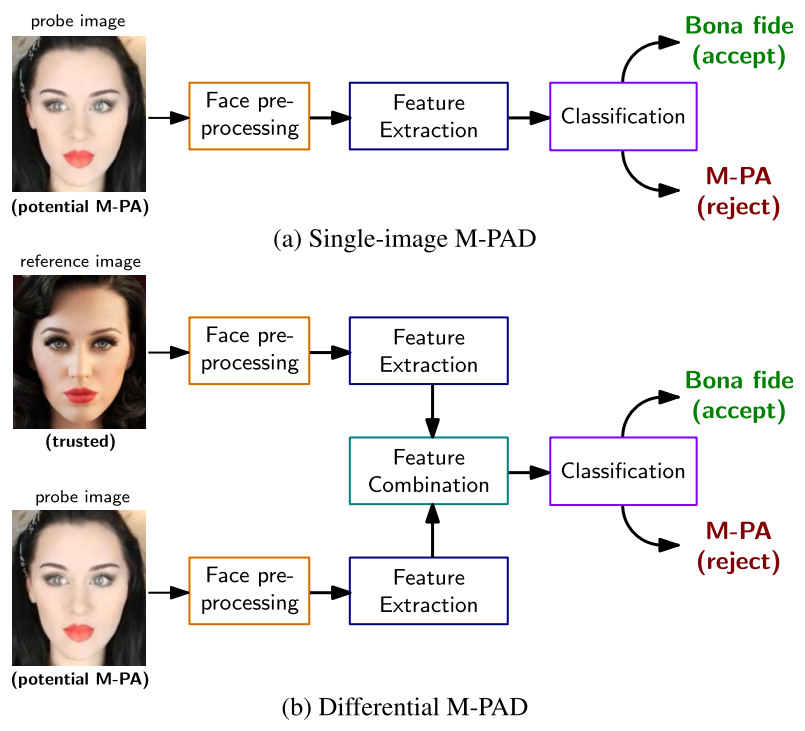

Detection of Makeup Presentation Attacks

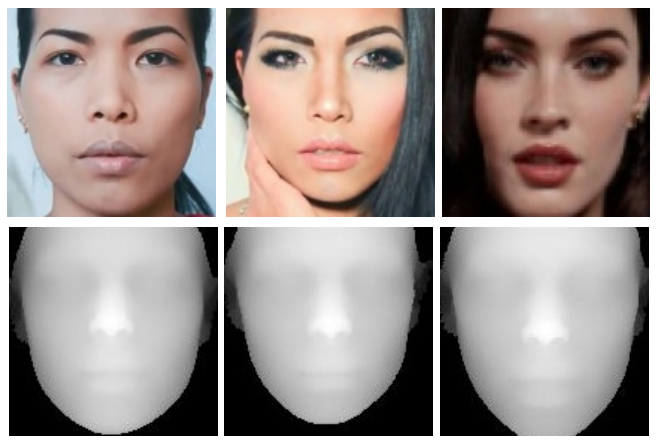

A Convolutional Neural Network (CNN) can detect M-PAs aimed at identity concealment with 93.88% accuracy by analyzing texture and shape cues. A multispectral camera operating in the short-wave infrared (SWIR) range of the electromagnetic spectrum is a promising alternative, especially when coupled with a GAN that removes makeup and recreates the person's original appearance. However, this approach is costly because it requires additional equipment.

A Deep Tree Learning (DTL) method employs spoofing clusters to make a binary decision when analyzing an image. It yielded a Detection Equal Error Rate (D-EER) of 50% for concealment attacks and 10% for impersonation attacks; since D-EER is an error rate, lower values are better. Another method uses RGB data to estimate facial depth with a 3D reconstruction tool. If an M-PA is taking place, the system detects it by comparing the captured and reconstructed images.

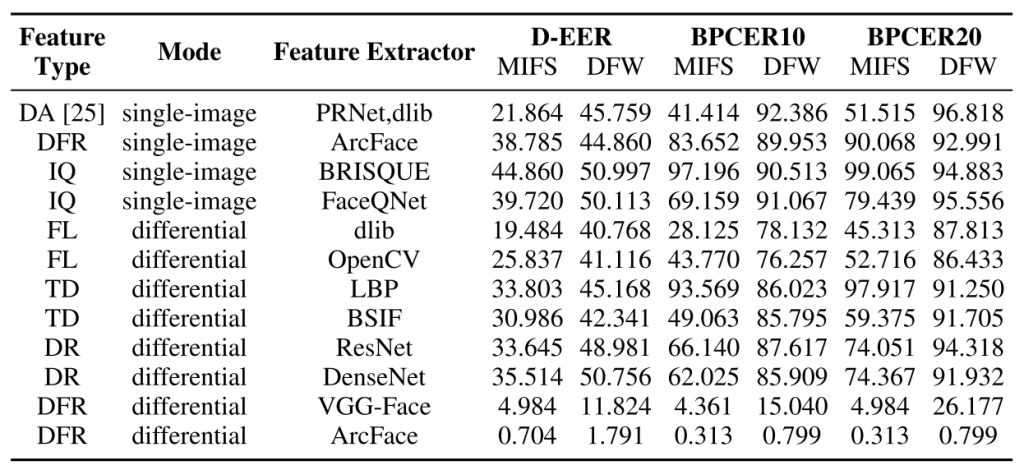

Comparative Analysis of Makeup Attack Detection Systems

The performance of M-PA detection methods is evaluated using metrics such as the Bona Fide Presentation Classification Error Rate (BPCER), False Non-Match Rate (FNMR), False Match Rate (FMR), Impostor Attack Presentation Match Rate (IAPMR) and Relative Impostor Attack Presentation Accept Rate (RIAPAR). The table below shows the performance of various detection approaches, such as PRNET.dlib, BRISQUE and ResNet, in terms of BPCER after testing them on the MIFS and Disguised Faces in the Wild (DFW) datasets.
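Most of these metrics come down to counting errors at a decision threshold. A minimal sketch, assuming the presentation attack detection (PAD) module outputs an attack score (higher means more likely an attack) and the face comparator outputs a similarity score; the function names and thresholds are illustrative. For simplicity, APCER is computed here over all attacks, whereas ISO/IEC 30107-3 reports it per PAI species.

```python
def pad_error_rates(bona_fide_scores, attack_scores, threshold):
    """Return (APCER, BPCER); a presentation is flagged as an attack when score >= threshold."""
    # APCER: attack presentations wrongly classified as bona fide.
    apcer = sum(s < threshold for s in attack_scores) / len(attack_scores)
    # BPCER: bona fide presentations wrongly classified as attacks.
    bpcer = sum(s >= threshold for s in bona_fide_scores) / len(bona_fide_scores)
    return apcer, bpcer


def iapmr(attack_comparison_scores, match_threshold):
    """Share of impostor attack presentations that the face comparator accepts
    as a match with the targeted identity (higher score = more similar)."""
    matched = sum(s >= match_threshold for s in attack_comparison_scores)
    return matched / len(attack_comparison_scores)


# Toy scores only: one attack slips through and one genuine user is rejected.
print(pad_error_rates([0.1, 0.2, 0.7, 0.3], [0.9, 0.8, 0.4, 0.95], 0.5))
print(iapmr([0.6, 0.3, 0.8, 0.9], 0.5))
```

A high IAPMR means the makeup fools the recognition system, while APCER and BPCER describe how well a detector catches such attacks without rejecting genuine users.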

FAQ

What is a Presentation Attack?

A presentation attack is a technique for deceiving a biometric system using a specific tool.

Presentation attacks (PAs) aim to bypass an antispoofing system with the help of special instruments, known as Presentation Attack Instruments (PAIs). These instruments include printed photos, silicone masks, gelatin fingerprints, voice samples and deepfake videos. A presentation attack is basically an impersonation: it is performed by an impostor who wants to be authorized as a legitimate person and gain access to a specific system. Potential targets of a presentation attack include credit cards, remotely controlled gadgets, datacenters, security systems, surveillance cameras, and IoT-based devices and systems.

Makeup Presentation Attack definition

Makeup Presentation Attacks involve facial alterations performed with cosmetics.

In face liveness detection, Makeup Presentation Attacks (M-PAs) employ makeup, either theatrical or casual cosmetics, to change someone’s appearance. Researchers point out that not all makeup presentations are adversarial, since many result from everyday use of cosmetics for aesthetic purposes, and that the word ‘attack’ has too negative a connotation to describe such benign cases.

Makeup spoofing is often divided into two types: a) the impostor type, in which movie-grade tools and professional skills are used to disguise a person as someone else, even someone of the opposite gender; and b) the concealment type, in which the culprit’s appearance is altered to make them unrecognizable to facial recognition.

Is it possible to spoof a system with makeup?

Makeup is believed to help circumvent facial recognition.

Makeup can potentially be used to bypass facial recognition. An Israeli study showed that makeup patterns generated with a specific utility can help evade facial recognition. The same idea was raised in 2010, when it was found that abstract geometric shapes painted on the face together with a specific hairstyle, dubbed Computer Vision Dazzle (CV Dazzle), could render face recognition systems ineffective.

An additional challenge comes from artistic makeup, which can mimic realistic skin features such as scars, birthmarks and freckles, or imitate someone else’s appearance. Malicious actors can also use facial occlusions such as glasses, as well as wigs and headwear.

How to detect makeup presentation attacks?

A group of techniques is proposed to detect makeup attacks.

Since makeup presentation attacks against facial recognition are possible, a range of countermeasures has been proposed. Convolutional Neural Networks (CNNs) have proven effective, detecting makeup attacks with 93.88% accuracy. Makeup removal with a Generative Adversarial Network (GAN), combined with a multispectral camera operating in the short-wave infrared (SWIR) range, is also possible, although costlier.

Facial depth detection with a 3D reconstruction tool and RGB data analysis is also promising: it compares the reconstructed and captured images to reach a verdict. Finally, the Deep Tree Learning technique uses spoofing clusters to analyze images and decide whether a spoofing attempt is taking place.

Do cosmetics influence facial recognition?

To an extent, makeup can fool facial recognition systems.

According to researchers from Ben-Gurion University, facial recognition (FR) can be tricked using makeup. In their experiment, they successfully spoofed a setup of two surveillance cameras that relied on a Multi-Task Cascaded Convolutional Neural Network (MTCNN).

Makeup has also long been used as a form of protest against facial recognition systems, as in the case of CV Dazzle or the AI-generated face paint design used during the Qatar World Cup, when some people applied geometric figures to their faces to conceal their identities. Even conventional makeup is believed to pose a threat to FR, and as a result various antispoofing methods, such as Deep Tree Learning (DTL), have been developed to address the issue.

What are the main makeup datasets?

The number of makeup spoofing datasets is limited.

Public makeup datasets are rather scarce. The two most notable examples are the YouTube Makeup Dataset (YMU) and the Virtual Makeup Dataset (VMU).

A similar collection was created at EURECOM from numerous images retrieved from various YouTube makeup tutorials. A unique aspect of this dataset is that it illustrates the entire makeup application process step by step.

The Age-Induced Makeup database contains both authentic and spoofing data. The Makeup In the Wild Database presents samples obtained in unconstrained, real-life conditions. The Makeup Induced Face Spoofing (MIFS) dataset illustrates makeup impersonation techniques.

References

- Makeup Presentation Attacks: Review and Detection Performance Benchmark

- ID.me gathers lots of data besides face scans, including locations. Scammers still have found a way around it

- YouTube Makeup Dataset (YMU)

- Virtual Makeup Dataset (VMU)

- African Tribal Makeup - Africa Beauty Inspiration

- Theatrical blood (Wikipedia)

- How to Create Wrinkles With Eyeliner: Inspired Beauty

- Video injection attacks on remote digital identity verification solution using face recognition

- Face Behind Makeup

- Automatic Facial Makeup Detection with Application in Face Recognition

- Can Facial Cosmetics Affect the Matching Accuracy of Face Recognition Systems?

- Facial cosmetics database

- Age-Induced Makeup database

- Makeup In the Wild Database

- Makeup Induced Face Spoofing (MIFS)

- Facial Cosmetics Database and Impact Analysis on Automatic Face Recognition

- Deep Tree Learning for Zero-shot Face Anti-Spoofing

- Vulnerability Assessment and Detection of Makeup Presentation Attacks

- PRNET.dlib

- BRISQUE

- ResNet

- Disguised Faces in the Wild
