History of the Term

Deepfake (derived from ‘deep learning’ and ‘fake’) is falsified synthetic media (video, photo, or audio) that presents an action a given person never performed in reality. The technology uses deep learning and artificial intelligence (AI) techniques to create the fake media.

Deepfakes first appeared on the social news network Reddit in 2017, with facial deepfakes being the predominant type. At the time, they were mostly used for entertainment and for producing fake pornography. Today, deepfakes more often serve as a tool for crime and other malicious activity, including blackmail, copyright infringement, reputation damage, and spoofing attacks. They were mentioned for the first time in a legal context in 2019 by the World Intellectual Property Organization (WIPO). Its report stated that deepfakes pose a far more serious threat than damage to intellectual property alone: if not detected in time and handled properly, they can cause lasting damage in the financial, political, and social domains, as well as personal and psychological harm.

According to the Idiap Research Institute, modern facial recognition systems are highly vulnerable to deepfakes, with almost 95% of deepfakes going undetected by advanced systems.

Work Anatomy

There are three main types of facial deepfake highlighted by experts:

- Face swap

- Face synthesis

- Altered facial expression

Face swap

Face swap is one of the simplest deepfake techniques, as there are numerous "out-of-the-box" solutions for generating facial deepfakes. These solutions often include casual mobile apps available from official stores such as Google Play and the App Store. More notorious examples include face-swapping websites and apps that can "insert" the face of any real person into a pornographic video. Such a website merely requires a photo of the target, and a face swap can be generated with a single click. A similar method is used by seemingly innocent applications such as Snapchat, Cupace, B612, and Reface, which are not intended for harmful purposes.

The technology behind face swapping is relatively simple. To produce a deepfake, an author trains two deep-learning autoencoders on the source and target faces: one learns the original face and the other its "replacement". The result is then fine-tuned with techniques such as Gauss-Newton optimization (a method for solving nonlinear least-squares problems), subtle face alignment, and blending of the images fed to the network.
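To make the Gauss-Newton step more concrete, here is a minimal sketch that uses it for a simplified face alignment problem: finding the scale and rotation that map one set of facial landmarks onto another. The function name `align_landmarks`, the landmark coordinates, and the assumption that both landmark sets are already centered (so no translation is fitted) are illustrative choices, not details taken from any particular deepfake tool.

```python
import math

def align_landmarks(src, dst, iterations=25, tol=1e-12):
    """Fit scale s and rotation theta so that s * R(theta) * src ~= dst (Gauss-Newton)."""
    s, theta = 1.0, 0.0  # initial guess: no scaling, no rotation
    for _ in range(iterations):
        c, sn = math.cos(theta), math.sin(theta)
        # Accumulate the normal equations (J^T J) delta = -J^T r.
        a11 = a12 = a22 = g1 = g2 = 0.0
        for (x, y), (u, v) in zip(src, dst):
            rx, ry = c * x - sn * y, sn * x + c * y  # rotated source point
            res = (s * rx - u, s * ry - v)           # residual for this landmark
            j_s = (rx, ry)                           # derivative of residual w.r.t. s
            j_t = (-s * ry, s * rx)                  # derivative of residual w.r.t. theta
            a11 += j_s[0] * j_s[0] + j_s[1] * j_s[1]
            a12 += j_s[0] * j_t[0] + j_s[1] * j_t[1]
            a22 += j_t[0] * j_t[0] + j_t[1] * j_t[1]
            g1 += j_s[0] * res[0] + j_s[1] * res[1]
            g2 += j_t[0] * res[0] + j_t[1] * res[1]
        # Solve the 2x2 linear system for the parameter update.
        det = a11 * a22 - a12 * a12
        ds = -(a22 * g1 - a12 * g2) / det
        dt = -(a11 * g2 - a12 * g1) / det
        s, theta = s + ds, theta + dt
        if ds * ds + dt * dt < tol:
            break
    return s, theta

# Hypothetical centered landmarks (eye corners, nose tip, chin).
src = [(-1.0, -0.5), (1.0, -0.5), (0.0, 0.3), (0.0, 1.0)]
true_s, true_theta = 1.2, 0.3
dst = [
    (true_s * (math.cos(true_theta) * x - math.sin(true_theta) * y),
     true_s * (math.sin(true_theta) * x + math.cos(true_theta) * y))
    for x, y in src
]
s, theta = align_landmarks(src, dst)
```

Each iteration linearizes the residuals around the current estimate and solves a small least-squares problem, which is why the method suits alignment tasks where the model is nonlinear in its parameters (here, the rotation angle).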

Although it is the easiest deepfaking method, face swap has some disadvantages. A deepfake produced with a face swap can display noticeable imperfections such as poorly timed eye blinking, distorted facial features, visual artifacts, and slightly or strongly desynchronized lip movement. Software such as Adobe After Effects, or a lip-syncing tool developed at Stanford, can be used to polish these flaws to some extent.

The face-swapping method relies on open-source tools such as FaceSwap and Face2Face. The most difficult part of the technique is training the autoencoders. It requires gathering a massive dataset of video frames of both people, accurately cropped so that the network can focus on their faces only.
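The cropping step can be illustrated with a small sketch. It assumes a face bounding box is already available (for example, from a face detector) and that a frame is represented as a plain list of pixel rows; the function name `crop_face` and the `margin` parameter are hypothetical and are not part of FaceSwap or Face2Face.

```python
def crop_face(frame, box, margin=0.2):
    """Crop a face region from a frame given as a list of pixel rows.

    box is (left, top, right, bottom) in pixels.
    margin expands the box by a fraction of its size, clamped to the frame.
    """
    height, width = len(frame), len(frame[0])
    left, top, right, bottom = box
    pad_x = int((right - left) * margin)
    pad_y = int((bottom - top) * margin)
    x0, y0 = max(0, left - pad_x), max(0, top - pad_y)
    x1, y1 = min(width, right + pad_x), min(height, bottom + pad_y)
    return [row[x0:x1] for row in frame[y0:y1]]

# A dummy 8x10 frame whose "pixels" record their own (row, column) position.
frame = [[(r, c) for c in range(10)] for r in range(8)]
face = crop_face(frame, box=(4, 2, 9, 6), margin=0.25)
```

Keeping a small margin around the detected box is a common design choice, since a crop that is too tight can cut off the jawline or hairline that the network needs for blending.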

Face synthesis

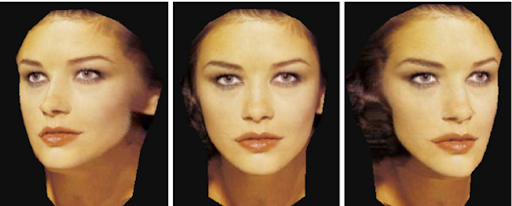

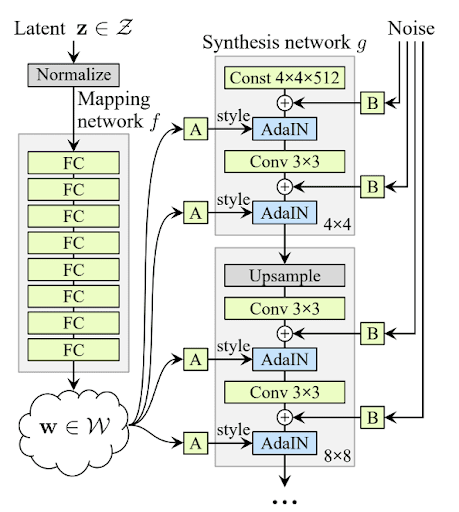

Face synthesis involves creating a highly realistic photo or video of a real or non-existent face. Such images are produced with the help of a Generative Adversarial Network (GAN). The method requires learning high-level attributes such as human identity. The visual data (photos of real people) passes through convolutional layers with the help of adaptive instance normalization. At the same time, every convolution gets a "dose" of Gaussian noise, which is added to the input data in the form of random values. Ultimately, traces of this noise can help detect a deepfake.
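The two operations mentioned above, noise injection and adaptive instance normalization, can be sketched on a single feature channel. This is a simplified illustration using plain Python lists; in a real generator the noise strength and the style scale and bias would be learned, and the values below are arbitrary assumptions.

```python
import random
import statistics

def add_noise(features, strength, rng):
    """Add per-position Gaussian noise, scaled by a (normally learned) strength."""
    return [f + strength * rng.gauss(0.0, 1.0) for f in features]

def adain(features, style_scale, style_bias, eps=1e-8):
    """Normalize one feature channel, then apply a style-dependent scale and bias."""
    mean = statistics.mean(features)
    std = statistics.pstdev(features)
    return [style_scale * (f - mean) / (std + eps) + style_bias for f in features]

rng = random.Random(0)                 # fixed seed so the sketch is repeatable
channel = [0.5, 1.5, 2.0, 4.0, 7.0]    # made-up activations of one feature map
noisy = add_noise(channel, strength=0.1, rng=rng)
styled = adain(noisy, style_scale=2.0, style_bias=-1.0)
```

After `adain`, the channel has a mean close to `style_bias` and a standard deviation close to `style_scale`, regardless of the input statistics. That is how a style can control the appearance of each layer's output.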

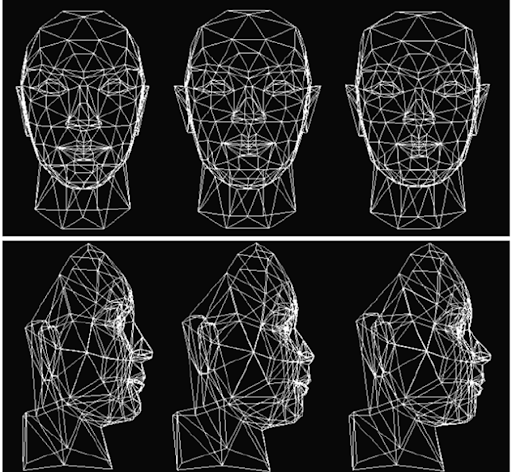

Face synthesis is a sophisticated process: a potential attacker must either have a high level of expertise or outsource the work to third-party experts, which is expensive. Modeling an artificial face from just a single image is also possible, although for better results this method requires a broad database of face geometry and texture. Using this method, a believable 3D image can be created and, if necessary, animated later. A similar technique is used by the MyHeritage app, which can animate a static 2D image.

While being perceived as a potential threat, face synthesis can also be quite useful in the advancement of anti-spoofing technologies. It can be used to model different head positions, making facial recognition systems more reliable and effective in the future.

Altered facial expression

Altered facial expressions, or face manipulation, can add more realism to a falsified image or video. They are considered an additional component rather than an independent deepfake technique.

Face manipulation is also based on GANs and can alter a face in different ways: changing facial expression, age, gender, hair and eye color, and so on.

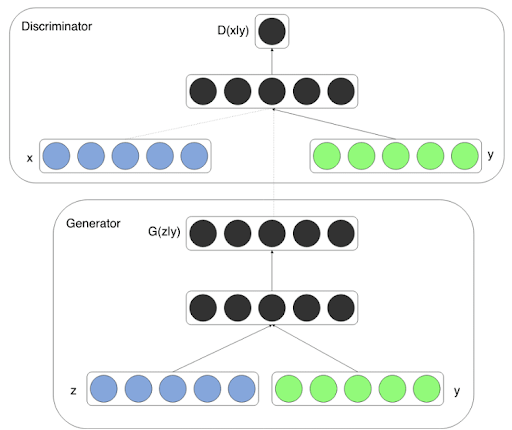

FaceApp is the best-known example of face manipulation performed by a neural network. By using a system of discriminators, generators, and target domain labels, the network can essentially "stitch" any emotion onto a target’s face.

Currently, altered facial expression technology is in its embryonic stage and is used mostly for casual entertainment. However, it is anticipated that future advances could make it possible to perform face manipulation in real time, similar to puppetry.

Examples

Numerous examples of deepfake videos are available on the web, and some deepfake subgenres, such as falsified pornography, are even banned from major platforms.

One of the most famous examples of deepfake is a "speech" given by Barack Obama. In reality, this is an artfully staged deepfake, in which every aspect — from head movement to voice — is closely mimicked.

Another video features Mark Zuckerberg "frankly confessing" that Facebook is focused on manipulation of people. This video has far worse quality, with the target’s head losing its contour at times.

While the mentioned examples are comedic, some other deepfake variations demonstrate how dangerous the technology can be. An intentionally edited clip from a child safety film caused panic in India over child kidnapping concerns. Shared via WhatsApp, the edited video led to outbursts of hysteria and violence, resulting in a number of tragic deaths.

Mitigating Deepfakes

Deepfakes have become one of the major threats to security and facial recognition systems today. According to researchers at Sungkyunkwan University, even the facial recognition web APIs of major corporations such as Microsoft (Azure Cognitive Services) and Amazon can be fooled by deepfakes. Their research showed that such APIs identified deepfake celebrity images as real ones. This high plausibility can lead to devastating consequences, making way for crimes like identity theft, online extortion, impersonation fraud, and even stock market manipulation.

As deepfakes have evolved, countermeasures against them have advanced as well. For instance, face swapping can now be detected using temporal sequential analysis. This method extracts frames from the video with the help of OpenCV. A temporal C-LSTM model then detects which frames were produced by face swapping.
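A temporal model works on short runs of consecutive frames rather than on single images. The sketch below shows one way to group extracted frames into overlapping fixed-length sequences before they are passed to a sequence model; the function name `make_sequences` and the `length` and `stride` values are illustrative assumptions, and the frames are represented by their indices only.

```python
def make_sequences(frames, length, stride):
    """Split an ordered list of frames into overlapping fixed-length sequences."""
    return [
        frames[i:i + length]
        for i in range(0, len(frames) - length + 1, stride)
    ]

frame_ids = list(range(10))  # stand-ins for 10 extracted video frames
sequences = make_sequences(frame_ids, length=4, stride=3)
```

Because neighboring sequences overlap, a manipulated frame is usually seen in more than one temporal context, which can help the model localize where the face swap occurs.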

Facial recognition and deepfake detection systems have also improved at rejecting presentation attacks that use a deepfake. They rely on methods such as eye pupil movement tracking, infrared scanning, and 3D cameras to distinguish a real face from an artificially created one.

Another method to detect deepfaking uses AI to analyze phoneme-viseme mismatches. In other words, the AI compares lip movement and mouth shapes with the words being spoken. If it finds any discrepancy between them, even a minute one, the system automatically flags the video as a deepfake.
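The idea can be sketched with a toy check. It assumes that each video frame already has a phoneme label from the audio track and a mouth-openness score from the video, and it only tests sounds such as M, B, and P, which require the lips to close. The threshold, the labels, and the function name `find_mismatches` are hypothetical, and a real detector would be considerably more elaborate.

```python
CLOSED_LIP_PHONEMES = {"M", "B", "P"}  # sounds that need the lips to close fully

def find_mismatches(phonemes, mouth_open, threshold=0.1):
    """Return indices of frames where a closed-lip phoneme is spoken with an open mouth.

    phonemes: one phoneme label per frame (from the audio track).
    mouth_open: one openness value per frame, 0.0 = closed, 1.0 = wide open.
    """
    return [
        i
        for i, (phoneme, opening) in enumerate(zip(phonemes, mouth_open))
        if phoneme in CLOSED_LIP_PHONEMES and opening > threshold
    ]

phonemes = ["M", "AA", "P", "IY", "B"]
mouth_open = [0.02, 0.80, 0.45, 0.30, 0.05]
suspicious = find_mismatches(phonemes, mouth_open)
```

In this made-up example only the frame with the "P" sound is flagged, because the mouth stays noticeably open while a closed-lip sound is pronounced.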

Some low-quality deepfakes can even be detected with the naked eye. Usually, they reveal themselves when a target has a misshapen skull contour, an overly smooth or overly wrinkled face, unnatural blinking, or misplaced shadows from glasses, nose, brows, and so on.

In 2020, Kaggle hosted the Deepfake Detection Challenge in collaboration with Facebook, Microsoft, the Partnership on AI's Media Integrity Steering Committee, and others, with the aim of bringing recognition systems up to date with deepfake techniques. The challenge offered a prize pool of $1 million and attracted 2,114 participants and 35,000 submissions. The best solution achieved an accuracy of 65.18 percent against the black box dataset. A more detailed description of the challenge dataset is available on arXiv, which is hosted by Cornell University. While the challenge seems to be a one-time event, similar initiatives are likely to be launched in one form or another in the future, as major issues caused by deepfakes remain unresolved.

FAQ

Deepfake definition

Deepfake is a piece of synthetic media, in which a person's face in an image or recorded video is replaced with another person's face or likeness.

Deepfake is a term used for images, videos, and audio recordings that were never actually taken or recorded. It refers to completely falsified media forged with the help of sophisticated tools such as Generative Adversarial Networks (GANs), Convolutional Neural Networks (CNNs), extensive face databases, and so on.

Facial deepfakes partly belong to the category of digital face manipulation, which includes techniques such as identity swap, face morphing, and attribute manipulation. With the emergence of mainstream, easy-to-use applications such as FaceApp, Adobe Voco, DeepFaceLab, and ZAO, creating deepfakes has become possible even for amateur users.

What is deepfake technology?

Deepfake technology encompasses a collection of techniques and tools used to synthesize fake media.

Deepfakes can be generated by numerous means: from sophisticated neural networks to simple mobile applications like Reface. While some deepfake tools are simple and available online, others require a high level of expertise and training.

Techniques to produce a realistic deepfake include Gauss-Newton optimization, image blending, usage of a Generative Adversarial Network (GAN), high-level attribute manipulation, and so on. One type of deepfake is based on the face swap technique (where one person's face in an image or video is replaced with another), while another type involves a completely new, synthesized face. The second type uses two competing networks, Generator G and Discriminator D, which together can produce highly realistic images.
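The competition between the two networks is usually expressed through their loss functions. The sketch below computes these losses for single discriminator outputs, where `d_real` and `d_fake` are the probabilities that D assigns to a real image and to a generated image being real. The generator loss shown is the commonly used non-saturating variant, chosen here as an assumption rather than as the method of any specific deepfake tool.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy: D should output 1 for real images and 0 for generated ones."""
    return -(math.log(d_real) + math.log(1.0 - d_fake))

def generator_loss(d_fake):
    """Non-saturating generator loss: G is rewarded when D scores its output as real."""
    return -math.log(d_fake)

# When D cannot tell real from fake, it outputs 0.5 for both.
equilibrium = discriminator_loss(0.5, 0.5)
```

Training alternates between lowering the discriminator loss and lowering the generator loss. As the generator improves, `d_fake` rises, which increases the discriminator's loss and pushes it to become more discerning in turn.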

When did the first deepfakes become widespread?

Deepfakes first became a public phenomenon in 2017.

According to antispoofing experts, deepfakes are not a novelty. The early deepfake tool Video Rewrite was developed in 1997. It could alter the lip movement of a target to match a different audio track. An early example of a deepfake created using this method is thought to be one of J. F. Kennedy’s speeches, created after his death.

The deepfake phenomenon became widespread in November 2017, when a Reddit user under the nickname “deepfakes” posted falsified pornographic videos that employed a face swap technique, targeting prominent Hollywood actresses and singers. Deepfake proliferation sparked the need for reliable antispoofing tools.

Why are deepfakes potentially dangerous?

According to experts, deepfakes are the most serious AI threat yet to emerge.

In antispoofing, deepfakes are seen as a highly dangerous tool of deception and fraud. There are multiple ways to cause harm using falsified media. As deepfake tools evolve and become more advanced, it will be easier to orchestrate crimes ranging from money theft to corruption and sabotage of national institutions. Potential uses include defamation, reputational damage, blackmail, online bullying, stock-price manipulation, and smart system hijacking.

One of the biggest examples of deepfakes being used in a money heist occurred in the UAE: perpetrators stole $35 million by using a fake voice to access an account.

References

- Synthetic Media, Wikipedia

- WIPO, Official website

- Deepfake videos easily fool face systems, researchers warn, Idiap Research Institute

- A horrifying new AI app swaps women into porn videos with a click, MIT Technology Review

- Text-based Editing of Talking-head Video

- Generative adversarial network, Wikipedia

- Face Synthesis, ResearchGate

- MyHeritage project, official website

- India WhatsApp 'child kidnap' rumours claim two more victims, BBC News

- Am I a Real or Fake Celebrity? Measuring Commercial Face Recognition Web APIs under Deepfake Impersonation Attack

- Deepfakes: temporal sequential analysis to detect face-swapped video clips using convolutional long short-term memory, SPIE

- A C-LSTM Neural Network for Text Classification, Arxiv.org

- Detecting Deep-Fake Videos from Phoneme-Viseme Mismatches

- Deepfake Detection Challenge, Kaggle.com

- Deepfake Detection Challenge Results: An open initiative to advance AI, Facebook AI

- The DeepFake Detection Challenge (DFDC) Dataset, Arxiv.org

Antispoofing
