Definition and Problem Overview

As new applications for AI continue to expand, so does concern over its potentially questionable, or even dangerous, uses. AI ethics is an emerging field that addresses the moral issues raised by AI innovations. While AI adds to the toolkit of malicious actors who may use it for biometric spoofing or scams, there are also ethical issues inherent to the technology itself: racial and gender bias, discrimination, lack of transparency, and others.

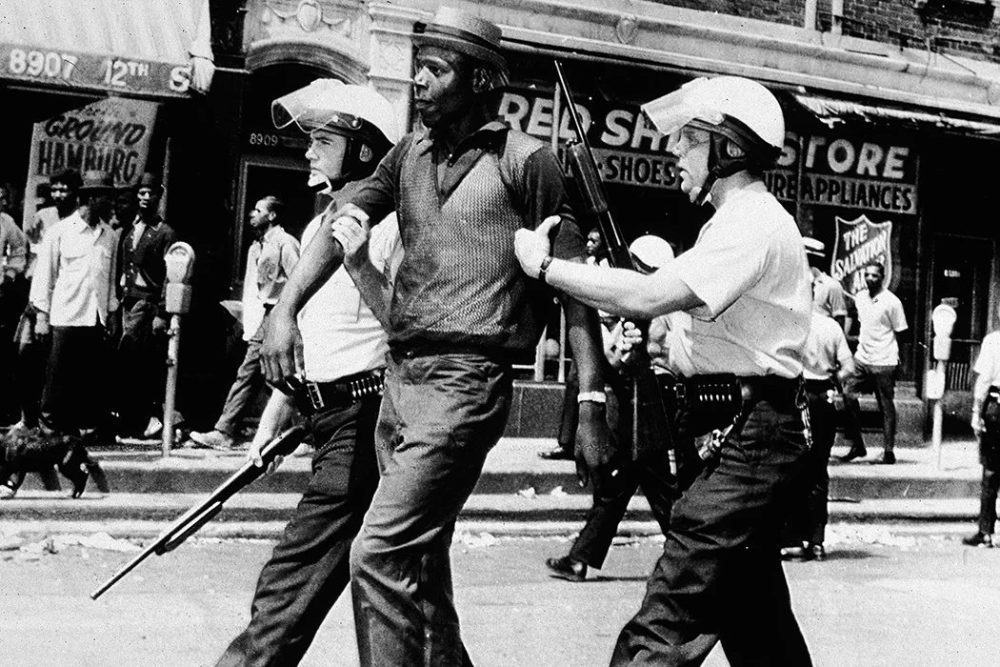

Although the issues posed by advanced AI present a new social challenge, this is not the first time we have encountered ethical problems related to AI. In the 1960s, the Kansas City police employed a primitive AI-like system called the Police Beat Algorithm. Among other functions, it processed the racial and geographic data of people who had been arrested. As a result, it led to an unfair increase in surveillance and profiling of the Black and brown communities living in marginalized areas of the city.

To avoid repeating the mistakes of the past and to meet the new challenges brought on by advancing technology, AI experts have discussed and advocated for the concept of ethical AI. Academics, legislators, and whistleblowers, from Stuart Russell to Joy Buolamwini, Geoffrey Hinton, and others, have proposed ethical frameworks for AI technology.

Ethical Problems Posed by AI

Because of its power, AI may cause dramatic consequences if used in the wrong way. There are at least three ways to turn artificial intelligence against society:

- Nefarious use. This issue is already on the rise in the real world: CEO fraud involving deepfake voices, the spread of disinformation, blackmail, sextortion, and more.

- AI abuse. Even when it is not intended for malevolent purposes, the technology itself can pose a threat. For example, online users can fairly easily coax or fine-tune a Large Language Model (LLM) into writing insults, spreading misinformation, or creating incendiary content. Another scenario, described in a report, involves children forcing a driverless car to slow down in their presence for fun, which can result in passenger annoyance, decreased service demand, and possibly even fatalities.

- Malevolent AI. A bad actor deliberately designing a malevolent AI is also an unsettling possibility. Such an endeavor may come from petty criminals, unscrupulous researchers, or even a repressive government.

Another scenario, albeit a dystopian one, is discussed at length by the Swedish philosopher Nick Bostrom, who considers the possibility of AI becoming overly independent. As the technology advances, AI may develop its own goals and criteria that are at odds with human moral values.

The Main Ethical Concepts Used in AI

Placing AI within a philosophical framework may keep it within moral boundaries and help prevent those scenarios. Frameworks that have been proposed include:

- Utilitarianism

Developed by Jeremy Bentham and John Stuart Mill, utilitarianism holds that human action is driven by the desire to obtain pleasure and avoid pain or suffering, and that the right action is the one that maximizes pleasure while minimizing suffering. However, utilitarianism may be too “cold and calculating” a framework to impose on an AI, which lacks the nuances of human emotion and critical thinking. For example, such an AI might authorize euthanasia too readily, arbitrarily manage someone else’s finances, or rank patients by priority.
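The utilitarian calculation described above can be sketched as a simple scoring rule: add up pleasure minus pain for everyone affected, then pick the action with the highest total. This is a minimal illustration under loud assumptions, not a method from the source: the `Outcome` class, the numeric scores, and the example actions are all hypothetical, and real well-being is far harder to quantify.

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    """Hypothetical effect of an action on one affected person (arbitrary units)."""
    pleasure: float
    pain: float


def net_utility(outcomes: list[Outcome]) -> float:
    # Classical utilitarian sum: total pleasure minus total pain over everyone affected
    return sum(o.pleasure - o.pain for o in outcomes)


def choose_action(actions: dict[str, list[Outcome]]) -> str:
    # Pick the action with the highest net utility; on a tie, max() keeps the first one listed
    return max(actions, key=lambda name: net_utility(actions[name]))


# Hypothetical example: two treatment options, each affecting two people
actions = {
    "treat_patient_a": [Outcome(pleasure=8, pain=1), Outcome(pleasure=0, pain=3)],
    "treat_patient_b": [Outcome(pleasure=5, pain=0), Outcome(pleasure=2, pain=1)],
}
print(choose_action(actions))  # prints "treat_patient_b" (net 6 versus net 4)
```

Note that the sketch decides purely by the totals; nothing in it weighs consent, dignity, or context, which is exactly the “cold and calculating” concern raised above.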

- Deontology

Deontology is a normative theory that judges actions by whether they follow a set of moral rules or duties, rather than by their consequences. Examples of such rule sets include the Ten Commandments in Christianity, the mitzvot in Judaism, the Five Precepts in Buddhism, and others.

In the context of AI, deontology implies that norms of moral conduct can be programmed into an artificial model from the start. This idea loosely overlaps with the Three Laws of Robotics penned by Isaac Asimov, which forbid robots from harming or disobeying humans. A similar idea is expressed by Nick Bostrom, who described Motivation Selection methods that limit an AI’s ambitions, goals, and intentions.
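A deontological constraint can be sketched as a hard filter applied before any other reasoning: an action that breaks a rule is discarded no matter how attractive its consequences. The rule names, the flag-based action format, and the `permitted` helper below are hypothetical, chosen only to echo the Asimov-style prohibitions mentioned above; this is an illustrative sketch, not how any particular system encodes rules.

```python
from typing import Callable

# Hypothetical action format: flags describing what the action would do
Action = dict[str, bool]

# Each rule returns True when the action is forbidden; rules are hard constraints, not costs
RULES: dict[str, Callable[[Action], bool]] = {
    "do_not_harm_humans": lambda a: a.get("harms_human", False),
    "do_not_disobey_humans": lambda a: a.get("disobeys_order", False),
    "do_not_deceive": lambda a: a.get("deceives_user", False),
}


def violated_rules(action: Action) -> list[str]:
    """Names of every rule the action would break."""
    return [name for name, forbids in RULES.items() if forbids(action)]


def permitted(candidates: dict[str, Action]) -> list[str]:
    # Keep only actions that break no rule, regardless of their expected consequences
    return [name for name, action in candidates.items() if not violated_rules(action)]


candidates = {
    "answer_truthfully": {},
    "white_lie": {"deceives_user": True},
    "ignore_stop_command": {"disobeys_order": True},
}
print(permitted(candidates))  # prints ['answer_truthfully']
```

The contrast with the utilitarian sketch is the design point: here a forbidden action is never traded off against benefits, it is simply removed from consideration.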

- Stoic virtues

Stoicism is a philosophy that promotes self-control and fortitude as characteristics that prevent destructive behavior. Although AI obviously does not have “self-control,” an interesting concept from research professor Gabriel Murray suggests that AI can be taught the four Stoic virtues:

- Temperance. AI’s capability to restrain itself.

- Justice. AI should refuse to mislead a human.

- Wisdom. Flexible, innovative, and commonsense thinking.

- Courage. Willingness to sacrifice itself for a higher good.

By adopting these virtues, AI could also practice approval-directed behavior, meaning that its actions would be guided by the values that “its overseer would highly rate.” Humans are imperfect, but proponents suggest that computers could come closer to perfection, perhaps even achieving the “sage” status of virtue revered by Stoic philosophers.
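Approval-directed behavior can be sketched as choosing, among candidate actions, the one a model of the overseer predicts they would rate highest. This is a minimal sketch under stated assumptions: the rating table stands in for a hypothetical learned model of the overseer, and the `min_rating` threshold that makes the agent defer instead of acting is an added illustrative safeguard, not part of the source.

```python
from typing import Callable, Optional


def approval_directed_choice(
    candidates: list[str],
    predict_rating: Callable[[str], float],
    min_rating: float = 0.5,
) -> Optional[str]:
    """Return the candidate the overseer is predicted to rate highest, or None to defer."""
    # Approval-directed step: score each action by the overseer's predicted rating
    best = max(candidates, key=predict_rating)
    # Assumed safeguard (not from the source): defer to a human if even the best option rates poorly
    return best if predict_rating(best) >= min_rating else None


# Hypothetical learned model of the overseer, reduced here to a fixed lookup table (ratings in [0, 1])
overseer_ratings = {"answer_honestly": 0.9, "refuse_politely": 0.7, "flatter_user": 0.4}
print(approval_directed_choice(list(overseer_ratings), lambda a: overseer_ratings[a]))
# prints "answer_honestly"
```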

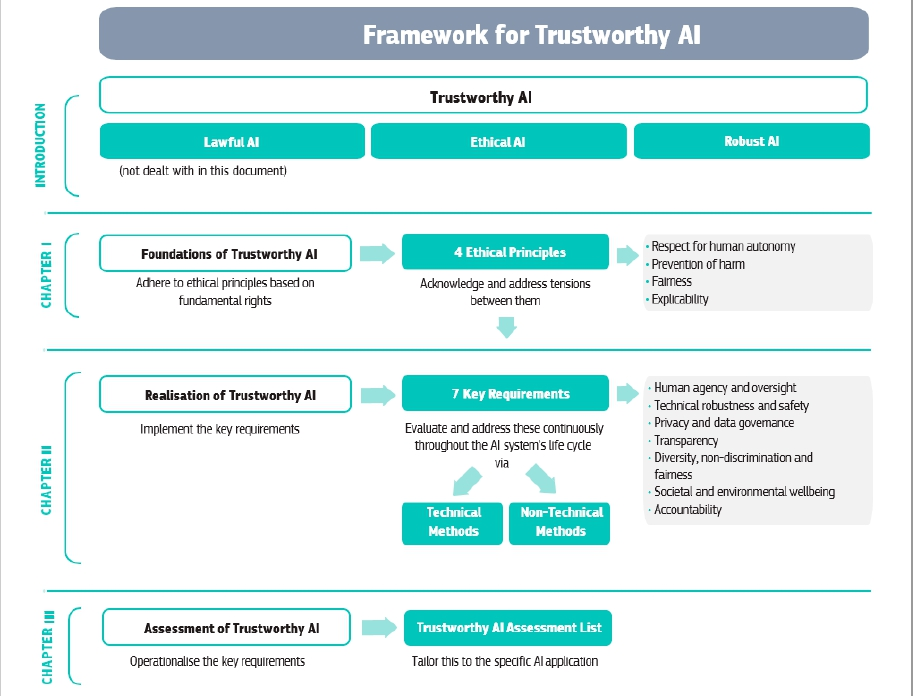

Ethics Guidelines for Trustworthy AI

The Ethics Guidelines for Trustworthy AI, developed by the High-Level Expert Group on AI set up by the European Commission, are perhaps the first document to detail the moral components of AI design. They combine elements of the aforementioned philosophical schools of thought to propose ethical principles drawn from basic human rights, especially the right to protection for both individuals and groups.

These ideas include respect for human dignity, freedom of the individual, equality and non-discrimination, and other essential boundaries. Some topics covered by the guidelines are:

- Prevention of harm. AI systems should not cause or worsen harm to users.

- Fairness. Users should be protected from bias and stigmatization.

- Explicability. Decisions, algorithms, and goals of an AI should be explained.

- Respect for human autonomy. AI solutions must not deceive or manipulate users for their own advantage.

The guidelines themselves are not legally binding, but with the introduction of the AI Act, many of these principles are becoming legally binding within the EU.

AI Ethics Standardization and Documentation

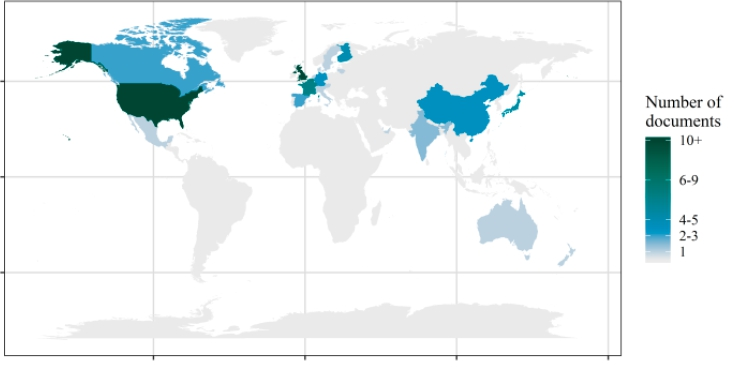

In August 2018, an ambitious effort was launched to catalogue all existing documents on AI ethics. In total, 112 documents were selected and analyzed to identify patterns in the topic.

Interestingly, the analysis showed that the private sector rarely addresses the mass unemployment AI could potentially cause; the topic is mostly discussed in papers published by NGOs. This may indicate that private companies are evading responsibility on this issue.

While some moral topics are eagerly discussed, others are largely overlooked: the human-robot relationship, existential threats, cultural sensitivity, the psychological impact of the technology, and other important themes.

Ethical Metrics

There is an ongoing debate over how exactly an AI can be recognized as moral. One paper brings up the famous trolley problem, introduced by the philosopher Philippa Foot, in the context of self-driving AI: which course of action would an AI take if it faced a moral dilemma?

To assess its morality, the paper suggests 10 criteria:

- The term “ethics” should be replaced with the more measurable term “values.”

- Developers should explain how their solution aligns with those values.

- AI data and code should be made available for mandatory review.

- Funding sources should be explicitly named.

- Internal review transparency is crucial.

- AI’s capabilities and limits must be clarified.

- Institutional Review Boards (IRBs) should be established for the AI sector.

- Researchers from other fields should be encouraged to study AI as well.

- Social awareness related to this technology should be raised.

- AI authors and journalists must abstain from sensationalist PR stunts when presenting technologies.

Together, these requirements are referred to as benchmarking ethics.

Modeling Moral AI

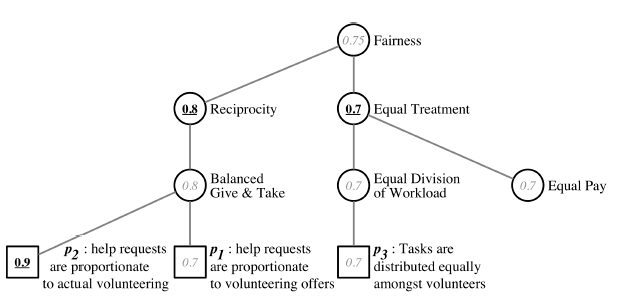

To make the abstract notion of a “moral value” more quantifiable, researchers created a computational model that represents a value taxonomy as a directed acyclic graph (DAG): its nodes represent value concepts, and its edges express the relations between them.
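A value taxonomy of this kind can be sketched as an adjacency mapping, with a check that it really is acyclic and a traversal that collects every concept beneath a given value. The concept names and the broader-to-narrower edge direction are hypothetical choices for illustration; the source does not specify the model’s actual nodes or relations. The sketch uses Python’s standard `graphlib` module (3.9+).

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical value taxonomy: edges point from a broader value concept
# to the more specific concepts it subsumes
taxonomy: dict[str, list[str]] = {
    "human_wellbeing": ["physical_safety", "autonomy"],
    "physical_safety": ["road_safety"],
    "autonomy": ["privacy", "informed_consent"],
    "fairness": ["non_discrimination"],
}


def is_acyclic(graph: dict[str, list[str]]) -> bool:
    """Check the DAG property. TopologicalSorter reads each list as predecessors,
    i.e. the reverse direction, which does not change whether a cycle exists."""
    try:
        list(TopologicalSorter(graph).static_order())
        return True
    except CycleError:
        return False


def descendants(graph: dict[str, list[str]], root: str) -> set[str]:
    """All value concepts reachable from `root`, via iterative depth-first search."""
    seen: set[str] = set()
    stack = [root]
    while stack:
        for child in graph.get(stack.pop(), []):
            if child not in seen:
                seen.add(child)
                stack.append(child)
    return seen


print(is_acyclic(taxonomy))                       # prints True
print(sorted(descendants(taxonomy, "autonomy")))  # prints ['informed_consent', 'privacy']
```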

Another way to teach AI about ethical boundaries may be direct training on human judgments, an idea explored through the Moral Machine project. On this platform, visitors provide feedback by judging how morally acceptable the decisions made by an AI are.
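One simple way to turn such crowd feedback into a signal an AI could learn from is to count how often each option is preferred when it is shown. The sketch below assumes a hypothetical pairwise format (each visitor sees two outcomes and picks one); it is not the Moral Machine project’s actual data schema or analysis method.

```python
from collections import Counter

# Hypothetical crowd responses: each visitor sees two outcomes of a dilemma
# and picks the one they find more morally acceptable: (option_a, option_b, chosen)
responses = [
    ("swerve", "stay_in_lane", "swerve"),
    ("swerve", "stay_in_lane", "swerve"),
    ("swerve", "stay_in_lane", "stay_in_lane"),
    ("brake_hard", "swerve", "brake_hard"),
]


def preference_rates(responses: list[tuple[str, str, str]]) -> dict[str, float]:
    """Fraction of comparisons in which each option was chosen when it was shown."""
    shown, chosen = Counter(), Counter()
    for option_a, option_b, pick in responses:
        shown[option_a] += 1
        shown[option_b] += 1
        chosen[pick] += 1
    return {option: chosen[option] / shown[option] for option in shown}


rates = preference_rates(responses)
print(rates["swerve"])  # prints 0.5 (chosen in 2 of the 4 comparisons where it appeared)
```

Raw win rates ignore which alternative an option was paired with; a pairwise model such as Bradley-Terry would account for that, at the cost of more machinery.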

Value Alignment Problem

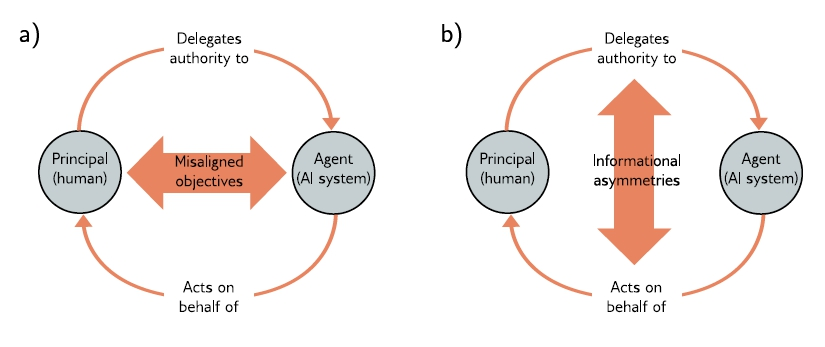

In the context of AI ethics, values are the goals or objectives this technology can have. Value alignment is the effort to align an AI’s values with human needs for safety, efficacy, and morality. This can be problematic because an AI may behave unpredictably in a chaotic environment such as the real world, causing it to deviate from its intended modus operandi.

It has been suggested that algorithmic fairness should be implemented alongside artificial moral agents, that is, independent AI systems capable of making ethical decisions.
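Algorithmic fairness can be made concrete with a measurable criterion. The sketch below computes the demographic parity gap, the difference in favorable-decision rates between groups; it is only one of several competing fairness definitions, chosen here for illustration. The loan-approval framing, the group labels, and the data are hypothetical.

```python
def positive_rate(decisions: list[int], groups: list[str], group: str) -> float:
    """Share of favorable decisions (1) received by members of `group`."""
    members = [d for d, g in zip(decisions, groups) if g == group]
    return sum(members) / len(members)


def demographic_parity_gap(decisions: list[int], groups: list[str]) -> float:
    # Demographic parity asks that every group receive favorable decisions at the same rate;
    # the gap is the spread between the best- and worst-treated groups (0.0 means parity)
    rates = [positive_rate(decisions, groups, g) for g in set(groups)]
    return max(rates) - min(rates)


# Hypothetical loan decisions (1 = approved) for applicants from two groups
decisions = [1, 0, 1, 1, 0, 0]
groups = ["a", "a", "a", "b", "b", "b"]
print(round(demographic_parity_gap(decisions, groups), 3))  # prints 0.333
```

A gap near zero does not by itself prove a system is fair; it only shows that this one criterion is met.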

Limitations of Moral AI and Future Solutions

Artificial intelligence is progressing faster than these frameworks can be applied. More challenges will undoubtedly arise as new architectures are developed and as new regulatory initiatives are introduced.

Proper scientific and public communication is critical to meet this growing demand for moral foundations for AI. As AI technology pushes forward, AI experts must consider diverse views on its development, strive for transparency, avoid exaggerating AI’s abilities and unnecessary anthropomorphism, and promote the importance of the ethical AI concept among students, researchers, sponsors, and corporations.

One of the most rapidly advancing fields of AI is Large Language Models (LLMs), which are the foundation of chatbots such as ChatGPT. To read more about LLMs and their potential for abuse in spoofing, check out this article.
