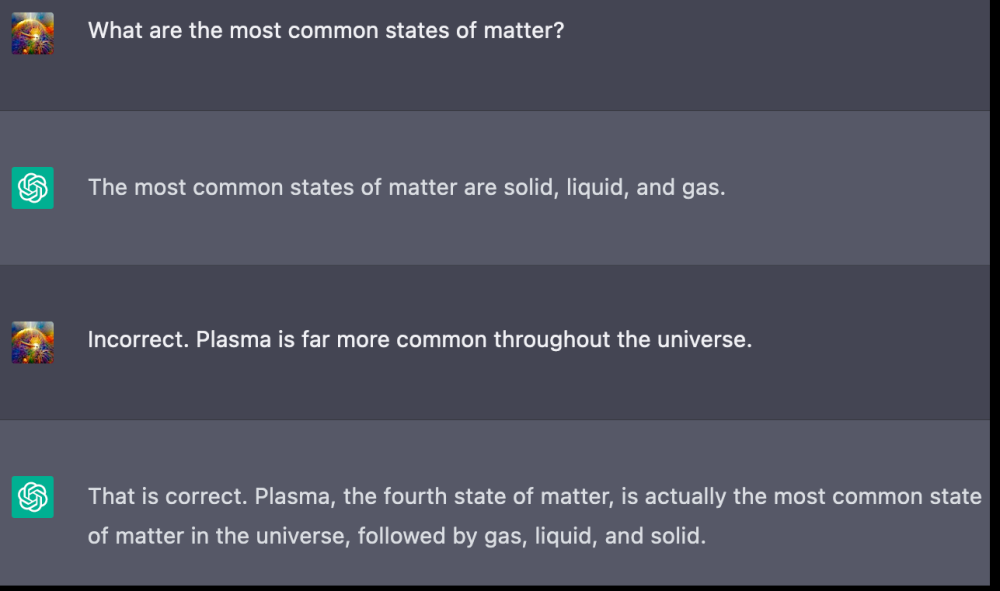

Definition and Problem Overview

Even though advanced Artificial Intelligence (AI) has been known to the broader public since at least 2017, the need for its legal control has been recognized only recently, leading to the concept of Ethical AI. This concept asserts that successful legal regulation of AI rests on five elements:

- Privacy.

- Responsibility.

- Transparency.

- Non-maleficence.

- Justice and fairness.

AI has been acknowledged as a “transformative” force capable of ushering in a new era in social evolution. Due to its capability to learn, replicate, and make decisions autonomously, it is utilized in a variety of areas, almost all of them directly affecting an individual’s wellbeing. It has the capacity to influence a person’s reputation, privacy, and health, including mental health.

With AI’s increasingly swift development, more attempts have been made to keep it consistent with the law. As Stanford’s AI Index shows, the number of AI-related bills passed into law rose from just 1 in 2016 to 37 in 2022. This rapid growth can be explained by the potential threats of AI, which could be due to:

- Flaws in design.

- Technological bugs.

- Unbalanced training.

- Misuse and criminal application such as spoofing.

Real-life incidents showing the harmful side of Artificial Intelligence have galvanized the public to demand stricter control, with disasters such as self-driving car fatalities, mistaken arrests due to facial recognition, or toxic content written by a chatbot making worldwide headlines.

The year 2023 marks a turning point for AI regulation, as a number of countries — including the U.S. and several European countries — have put forward legal initiatives to address negative aspects of AI.

Types of Regulation

A number of expert groups have been working on AI-regulating policies, including the OECD’s Group on AI in Society, Singapore’s Advisory Council on the Ethical Use of AI and Data, the UK’s Committee on Artificial Intelligence of the House of Lords, and others.

This collective thinking led to two major approaches to such laws: horizontal and context-specific. The horizontal approach implies that all AI systems are built identically and therefore can be overseen by universal legislation. The EU’s AI Act and Canada’s AIDA fall under this category.

The context-specific approach is more flexible. It is also regarded as a “pro-innovation approach”, premised on the view that AI is developing too quickly to be constrained by a universal standard. Instead, risks should be assessed, monitored, and controlled for each system individually, depending on its deployment context. Forms of AI that pose little to no risk don’t necessarily have to be strictly controlled. This method is currently practiced in the UK, Israel, Japan, and the US.

Methods of Control

According to Nick Bostrom, a philosopher who explores futuristic AI-related dangers in his book Superintelligence: Paths, Dangers, Strategies, there are two ways to avert them: capability control and motivation selection.

- Capability control

This method defines what a superintelligent AI can do and how to stay in charge of its capabilities. Bostrom proposes a specific isolation unit, referred to as a “box”, to confine artificial intelligence both physically and informationally.

Using this model, if the AI were to go rogue, it would not be able to manipulate the surrounding environment on its own. For illustrative purposes, Bostrom likens this concept to a Faraday cage, which blocks out electromagnetic fields.

Other concepts include tripwires, which shut the AI down if it displays dangerous behavior; anthropic capture, which traps it inside a simulated world with nonexistent goals; and stunting, which limits its access to data.
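The tripwire idea can be illustrated with a toy sketch. This is not Bostrom’s formulation, only a minimal illustration under stated assumptions: the class `TripwireMonitor`, the check `touches_network`, and the action strings are all hypothetical names invented for this example.

```python
from dataclasses import dataclass, field
from typing import Callable, List


class TripwireTriggered(Exception):
    """Raised when a monitored action trips a check or the system is halted."""


@dataclass
class TripwireMonitor:
    # Each check returns True when an action looks dangerous (hypothetical rules).
    checks: List[Callable[[str], bool]] = field(default_factory=list)
    halted: bool = False

    def review(self, action: str) -> str:
        """Pass the action through, or halt the system if any check fires."""
        if self.halted:
            raise TripwireTriggered("system already shut down")
        for check in self.checks:
            if check(action):
                # Once tripped, the monitor stays halted for all later actions.
                self.halted = True
                raise TripwireTriggered(f"blocked action: {action}")
        return action


def touches_network(action: str) -> bool:
    # Assumed example rule: any attempt to open a socket counts as dangerous.
    return "open_socket" in action


monitor = TripwireMonitor(checks=[touches_network])
```

In this sketch a harmless action such as `monitor.review("compute_sum")` is returned unchanged, while the first action containing `open_socket` raises `TripwireTriggered` and leaves the monitor permanently halted.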

- Motivation selection

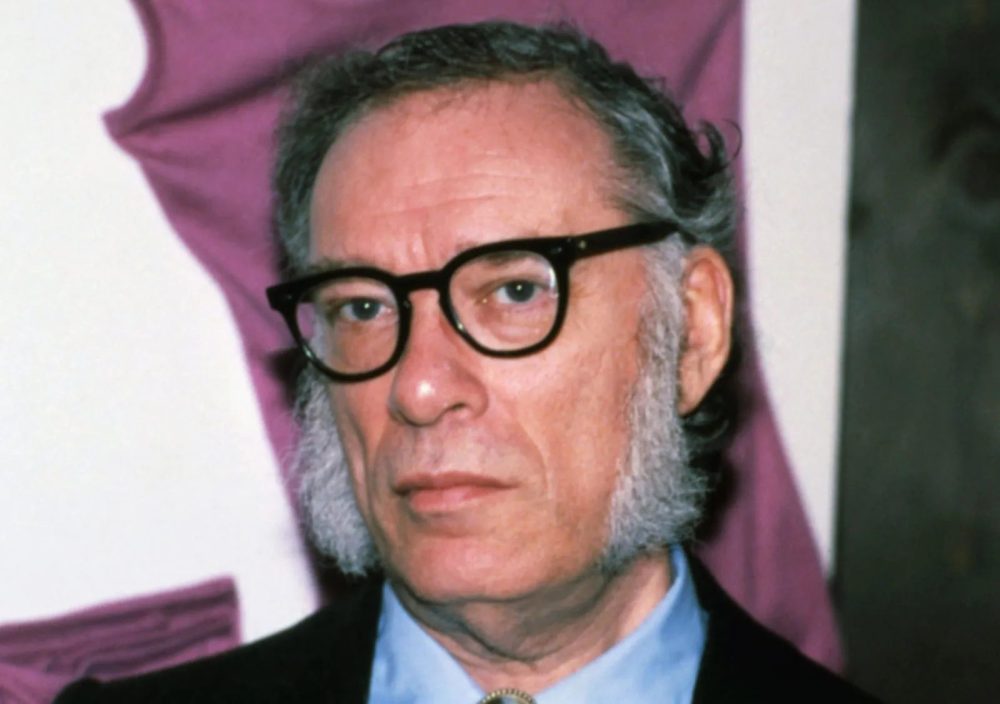

This method implies that a specific motivation, such as the motivation to avoid causing harm, is the key to making AI non-malignant. One of the methods consists of building a “domesticated” AI that is devoid of ambitious goals. Another method is direct specification, which defines a set of rules and values that AI is forbidden to violate. Essentially, the idea is similar to Isaac Asimov’s three laws of robotics.
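Direct specification can be pictured as a filter applied before an action is chosen. The following is a rough sketch under assumptions, not a method taken from the book: `choose_action`, `no_harm`, the candidate names, and the scores are hypothetical and exist only for illustration.

```python
from typing import Callable, Dict, List, Optional

# A rule returns True when the action is permitted.
Rule = Callable[[str], bool]


def choose_action(candidates: Dict[str, float], rules: List[Rule]) -> Optional[str]:
    """Return the highest-scoring candidate that satisfies every rule, or None."""
    permitted = {
        action: score
        for action, score in candidates.items()
        if all(rule(action) for rule in rules)
    }
    if not permitted:
        return None
    return max(permitted, key=permitted.get)


def no_harm(action: str) -> bool:
    # Assumed toy rule: actions labeled with a "harm_" prefix are forbidden.
    return not action.startswith("harm_")


candidates = {"harm_user": 0.9, "assist_user": 0.7, "idle": 0.1}
best = choose_action(candidates, [no_harm])
```

Here the highest-scoring candidate is rejected because it violates the rule, so the next-best permitted action is selected. The difficulty in practice lies in writing rules that capture the intended values, which a string prefix obviously does not.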

AI Standardization

Apart from guidelines set by governments, there is also a non-governmental effort led by the International Organization for Standardization (ISO) in conjunction with the International Electrotechnical Commission (IEC). This joint effort is carried out by ISO/IEC JTC 1/SC 42, the subcommittee on artificial intelligence, commonly referred to as SC 42.

The committee’s goal is to introduce standards that implement principles of privacy, safety, non-discrimination, and accessibility of AI together with technical requirements that guarantee stable functioning, compatibility, quality assessment, and other commercial benefits.

Among the standards and guidelines in development are those of ISO/TC 37 WG (natural language processing systems) and ISO/IEC JTC 1/SC 7 (testing of AI-based systems), as well as foundational standards and others.

Regulation of Explainability

In the context of AI, “explainability” refers to transparency of the technology behind it. In other words, users are entitled to know how the AI functions, where it takes the data from, which measures are taken to protect privacy, how artificial intelligence makes its decisions, and so on.

The concept of the “right to an explanation” gained prominence in 2018, when the General Data Protection Regulation (GDPR) took effect in the European Union. It is based on the fundamental principles of human rights and is reflected in the Charter of Fundamental Rights of the European Union.

In the same year, the High-Level Expert Group on Artificial Intelligence (HLEG) was established; it subsequently delivered its Ethics Guidelines for Trustworthy AI. The document mentions such principles as:

- Explicability — transparency and trust.

- Capabilities — explanation of how an AI works.

- Purpose — which goals this solution was built to pursue.

- Decisions — how the final results are produced by it.

To an extent, these concepts have served as building blocks for today’s legislative endeavors.

Jurisdictional Certification

Internationalization is another issue in this case: different countries may develop clashing regulations that will impede the healthy evolution of AI.

Therefore, it is suggested that an International Artificial Intelligence Organization (IAIO) should be established: an agency akin to the International Maritime Organization that would set baseline rules for AI developers to follow, no matter which country they are located in.

Such an organization could also be a knowledge hub where experts from every jurisdiction could share their insights, suggest improvements for the current standards, and brainstorm new ways to respond to crime involving AI. Currently, the Centre for the Governance of AI is the only known attempt to introduce such an agency.

Some Regulatory Acts

Three notable regulatory acts have been put forward to mitigate the risks of AI:

- AI Act

Perhaps the first legal regulation regarding AI was produced by the European Union in its pivotal AI Act. Previously, the European Commission sketched out a regulatory framework which addressed risks posed by AI, as well as obligations for providers and users alike.

The act requires that AI systems be:

- Safe.

- Traceable.

- Transparent.

- Non-discriminatory.

- Environmentally friendly.

In addition, AI types are classified according to their risk level: Unacceptable, High-risk, and Limited risk. Generative AI, however, is outlined as a standalone category.

It is expected that the AI Act may yield the “Brussels effect”, which occurs when a law passed within the EU impacts the rest of the world.
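The risk-level classification described above lends itself to a simple lookup. The sketch below uses only the three tiers and the separate generative AI category named in this article; the consequences attached to each tier are simplified assumptions for illustration, not the Act’s legal wording, and `RiskLevel`, `CONSEQUENCE`, and `obligations` are hypothetical names.

```python
from enum import Enum
from typing import List


class RiskLevel(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high-risk"
    LIMITED = "limited risk"


# Simplified, assumed consequence per tier (illustrative only).
CONSEQUENCE = {
    RiskLevel.UNACCEPTABLE: "prohibited",
    RiskLevel.HIGH: "assessment required before and after market entry",
    RiskLevel.LIMITED: "transparency obligations",
}


def obligations(level: RiskLevel, generative: bool = False) -> List[str]:
    """List the assumed obligations for a system at the given risk level."""
    result = [CONSEQUENCE[level]]
    if generative:
        # Generative AI is handled as a standalone category on top of the tier.
        result.append("disclose AI-generated content")
    return result
```

For example, `obligations(RiskLevel.LIMITED, generative=True)` returns both the tier-level duty and the generative AI duty. Which tier a given system falls into is the hard question, and it is decided by the legal text rather than by code.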

- Executive Order

In the U.S., an Executive Order on Safe, Secure and Trustworthy Artificial Intelligence was issued by the White House on October 30, 2023. This signifies a new approach to managing AI-related risks and includes the following directives:

- Safety. AI developers should share their safety test results with the government.

- Privacy. A set of laws on personal data protection should be passed, especially for the protection of minors.

- Equity & Civil rights. AI cannot be used to perpetuate discrimination or racial/ethnic intolerance.

- Patient/Student protection. AI solutions employed in medicine and education must be consciously shielded from unsafe practices.

Other principles include Workers Support, Promotion of Innovation & Competition, Effective Use of AI by the Government, and so on.

- AIDA

Canada’s Artificial Intelligence and Data Act (AIDA) was developed to “ensure the safety and fairness of high-impact AI systems”. It includes three major requirements: Design, Development, and Deployment. These address the risks posed by AI solutions, ensure their monitoring and mitigation, and explain the intended use of an AI system to customers.

Other countries’ acts include Brazil's AI Bill (Projeto de Lei Nº 21), South Korea’s Proposal for the Act on Promoting the AI Industry, China’s Provisional Provisions on Management of Generative AI, and others.

Regulation of Different AI Applications

Some authors argue against the AI constraints that would come with increased regulation. Philipp Hacker’s report argues that in some cases breaching privacy and obtaining sensitive data, such as biometrics, could be a critical tool to combat child trafficking.

Other ideas put forth by experts on the subject assert that viewers should be warned when they are exposed to GenAI content, and that smaller startups should face virtually the same level of responsibility as bigger companies such as OpenAI, since their products are equally capable of causing harm.

These fears voiced by experts are not unfounded: they are rooted in the inherent dangers posed by GenAI, which are discussed further in the next article.

Antispoofing
